Cloud Data Transformation in Fabric with Medallion Architecture

by Ginger Grant | First published: February 27, 2026 - Last Updated: March 2, 2026

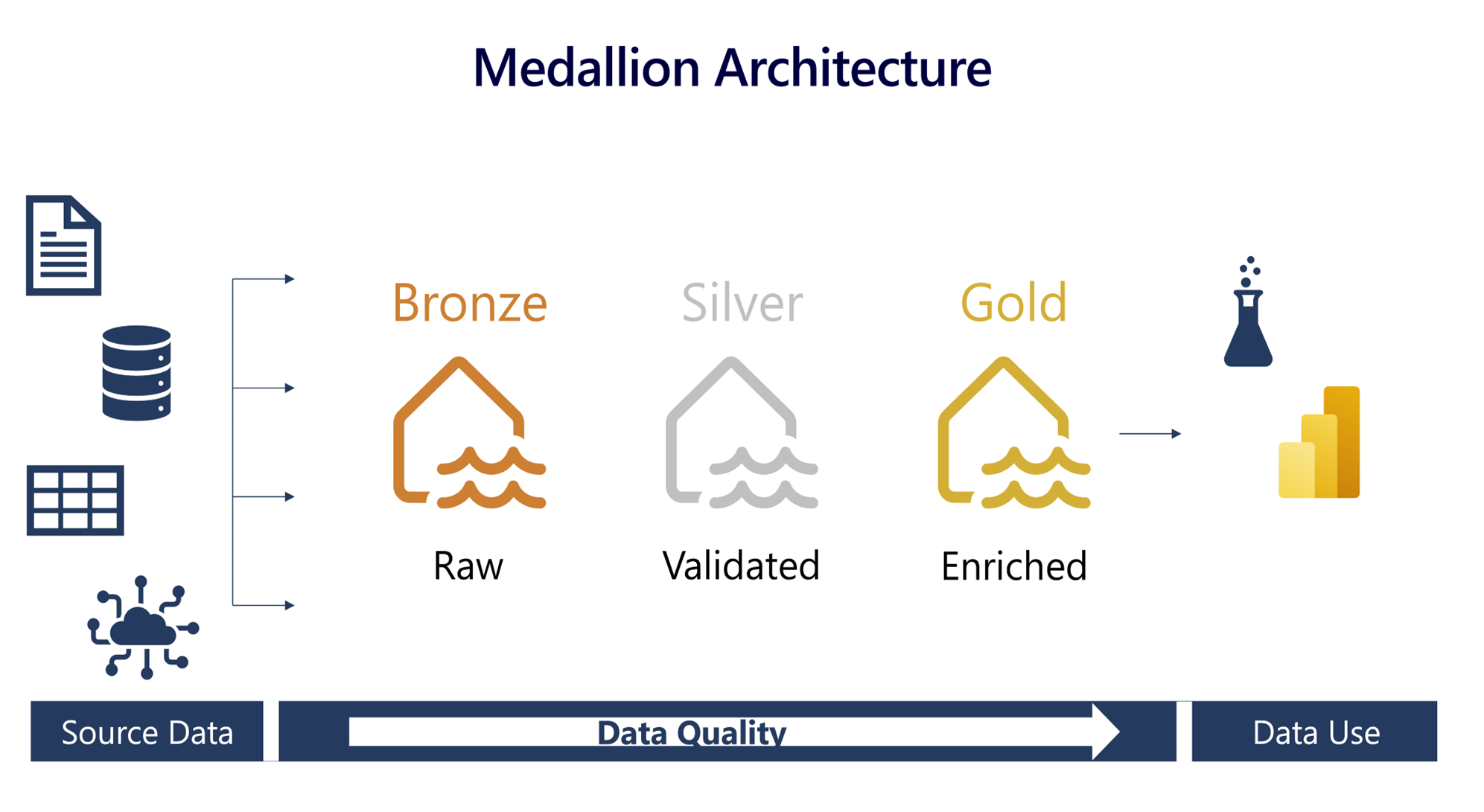

For at least the past 20 years, data engineers have been working on copying and moving data to transform it into an analytical model. The dominant approach was Extract, Transform, and Load (ETL); a process based on rigid, well-ordered, and defined data with expensive data storage and proprietary tools to transform the data. Today, things have changed as it is much cheaper to store data and because of data science and AI there is more desire for raw data and the architecture needs to change with it.

The Brittleness of Legacy ETL

Legacy ETL was intentionally brittle. Data transformation processes were designed assuming they would never break and nobody needed to look at the source data. When something did break, fixing it was very difficult because the source data was in memory during processing and never persisted in the raw state because that saved space. If the process broke before it wrote the data, well, it was lost and the system would need to get it again. The quality of the data received was not evaluated which meant there was effectively no governance. Extensive transformations were applied before the data was loaded, so no one actually reviewed the original source data after the data was loaded.

Medallion architecture is designed to address these failures. It is designed with the thought that space is cheap, so keeping records for troubleshooting, analysis and governance is encouraged.

Bronze Layer

The first step is retrieving and saving the data in its original source format. This first layer is commonly known as the bronze layer, but that name can vary. I’ve seen it called everything from Raw to One. The name is not as important as the process. By saving data in its raw state, organizations preserve the ability to use data in its raw form, which is essential for data science and AI tasks, including training large language models (LLMs). The raw data can also be evaluated to ensure the quality did not change over time, and data owners can then be held accountable for the data they send. Because the data stored within the bronze layer is not modified from the source data in any way, there is little chance of anything failing because at its core, the activity is copying the data.

Ensuring Robust Development Through Testing

When implementing medallion architecture using Fabric, both the code to extract the data and the data itself are stored in a workspace. The workspace should contain all the data designated for a specific data warehouse. For security purposes, data which contains personally identifiable information (PII) or is considered highly confidential may be isolated in a separate workspace to decrease access to sensitive raw data. As all processes need to be tested, there will need to be workspaces designated for raw data for development, test, and production. It is important to ensure that nothing goes into production which is not tested and that includes the data-gathering processes themselves. Do not skip the test workspace as this is where the data team will test the migration process and confirm that nothing is missed in the transition from development to production.

Data Access in the Bronze Layer

Data scientists, machine learning algorithms, and large language models may need access to the unstructured data found in the bronze layer. They need raw data to be able to provide the greatest insight. They do not want or need pre-built analytic models. Your IT team must configure appropriate security for these groups to be able to access the data. In Fabric, you can share lakehouses or data warehouses without granting access to the entire workspace. Sharing at the data level is preferable to granting workspace access, because workspace access also exposes the underlying extraction processes and code.

Silver Layer

Once you have the data, work in the silver layer will validate the data quality and transform it. This can include things like assigning data types to raw data, validating that the number of columns received meets the specifications and determining what to do if the data is not of sufficient quality to load for analysis.

Data Access in the Silver Layer

Validation was one of the steps frequently ignored in legacy ETL pipelines but is a vital part of the medallion approach. Once the data is validated, then there is little chance that there will be issues in the analytical modeling step. Generally speaking, business users do not need access to the silver layer, as data engineers use it for development and testing.

Gold Layer

The gold layer contains only analysis-ready, curated data that the organization will consume. Security needs to be applied to the data to meet access requirements. Semantic models for reporting are created here as well as the key performance indicators (KPIs) and other measurements needed to support business decisions. Because this layer is designed to support the data organization, you should collaborate with the end users and stakeholders to ensure that all required data elements are included in the warehouse.

Data Access in the Gold Layer

Data created for the gold layer will live in a workspace where many people need access. The groups of users are different than the data engineers who created the data store. Business analysts may be tasked to create the semantic model and add it to the workspace. Data elements in the workspace are shared with other Fabric users, who interact with the data subject to the security rules applied at the layer.

Conclusion: Why the Structure Is Worth It

Medallion architecture applies a different set of processes to achieve the same goal as ETL: developing a robust enterprise data source for analysis and reporting. The structure is not arbitrary. Each layer exists to solve problems that plagued earlier ETL designs including lost data, poor governance, brittle pipelines, and untestable transformations. The layers in medallion architecture give organizations a data platform that is easier to maintain, debug and extend. This layered approach ensures that data remains trustworthy, scalable, and fully aligned with the modern engineering patterns that Microsoft Fabric is built to support.