Delivering applications and services that are highly available is expensive. While WCF makes it possible to develop applications that can be deployed in a flexible manner to achieve various levels of availability and scale, it can be difficult to predict (and budget for) the appropriate level of availability given the not-so-predictable needs of the business and consumers. Windows Azure makes it possible to deploy WCF applications and services that theoretically can deliver unlimited levels of availability and scalability and be adjusted to the dynamic needs of an application’s user population. In this article, I'll explore how easy it is to develop, configure and deploy a .NET 3.5 Windows Communication Foundation service application for the Microsoft Windows Azure operating system.

Understanding Windows Azure from 10,000 Feet

Estimating and provisioning infrastructure is expensive because it is difficult to predict the elastic needs of an application over time. Even when experts conduct capacity planning, the needs of the business can expand and contract abruptly because demand for products and services is often elastic. As a result, companies often face a choice between going small or going big on their infrastructure purchases. The former introduces the very real risk of service delivery failure, and the latter can be prohibitively expensive. Many companies land somewhere in the middle, which means a never-ending procurement process and ballooning expenses, with server after server seemingly disappearing into a black hole. This is not only a drain on operating budgets from a hardware perspective; provisioning and deploying servers is also time-consuming and expensive, and it diverts resources from more tangible revenue-generating activities. On top of that, the cost of maintaining this ever-growing fleet rises with each new machine.

Imagine if you could architect, design and develop applications using the .NET skills you already have today and deliver your application to users at Internet-scale with just a few clicks. Rather than calculating the specific number of Web, application and database servers required to meet your customer’s needs today, tomorrow and during peak times (and hoping that you are not wrong), what if you could defer these decisions to the appropriate time and still guarantee scalability, reliability, performance and dependable failover?

In addition, what if you could provide your customers with a pay-as-you-go model in which they only pay for what they use, whether they are a small start up just getting started or an existing company launching a marketing campaign during the Super Bowl?

Now, imagine all of these benefits without ever having to worry about keeping up with security updates, patching servers, running backups or testing your failover or disaster recovery strategy.

Windows Azure is a cloud services operating system that is the bedrock of Microsoft’s Azure Services Platform and provides all of these capabilities and much more.

In addition to providing an operating system for the cloud, the Azure Services Platform includes .NET Services, SQL Services and Live Services technologies that enrich the development experience and reach of applications deployed in Windows Azure, in the enterprise, or a combination of the two.

In this article, I will focus on the Windows Azure cloud operating system which provides a development, run-time, and management environment that supports the ability to develop, deploy and run your applications at Internet-scale.

Windows Azure comprises two main services (the Compute Service and the Storage Service), a controller (the Fabric Controller) and a configuration system. I will cover each of these components next.

Note: Windows Azure is currently a technical preview technology. As such, much of what I present here is based on very early documentation and what I’ve learned on my own by using the technology preview. Don’t take anything I say here as the final word. As this goes to print, there may very well be new and improved ways of working with Windows Azure that I simply haven’t yet discovered.

The Compute Service

The Compute Service is a logical container for any number of Windows Server 2008 x64 virtual machines that host your application. The Compute Service is fronted by load balancers which ensure that requests for your application are always routed to an available virtual machine instance provided multiple instances have been configured.

The Compute Service is partitioned into two roles: Web Role and Worker Role. Each role has affinity to a virtual machine; however, an application in each role is never aware of what physical machine or logical virtual machine it is running on. This is key to how Azure scales and is yet another example of ever increasing levels of indirection required to solve the new challenges that modern computing introduces in new problem domains.

When designing an application for Windows Azure, your application must target either the Web Role, Worker Role or both. The distinction between the two is that an application designed for the Web Role runs IIS 7 and can accept HTTP/HTTPS requests from outside of the cloud (e.g., from your desktop or from your customer’s server in their own data center). A Worker Role can only accept input from another application inside of Windows Azure and accepts requests via Queue Storage. This is much the same way you might think about an application server in a traditional data center, which may run a series of WCF, WF, BizTalk or Windows NT Services to do asynchronous heavy lifting in the middle tier.

Of course, you may choose to design your logical application using a layered approach that includes both roles. This is an excellent pattern if you want to support background asynchronous work that does not consume resources in the Web Role application. In this article, I will focus on synchronous operations provided solely by the Web Role.

The Storage Service

The Storage Service provides three key storage options: Table Storage, Blob Storage and Queue Storage. Table Storage allows developers to model their conceptual schema using .NET entities without regard to the underlying logical storage technology. The Table Storage service is exposed using REST via an HTTP/HTTPS endpoint which provides the ability to insert, update, delete and read records within tables deployed to Table Storage.

Blob Storage allows you to store data in binary form, such as images, files or trees, and Queue Storage provides queue-based messaging between applications and roles.

In this article I’ll focus on Table Storage. As you will see later, if you are familiar with the Entity Framework, Table Storage should feel very familiar to you.

Azure Table Storage

As with all storage features in Azure Storage, Table Storage is an extremely scalable and reliable mechanism for storing the state of your applications and services. I won’t go into too much detail about Table Storage here (for more information on its architecture, see the sidebar “Azure Services Platform Whitepapers”), but for now it will be helpful to think about Table Storage as a hierarchy consisting of an account, tables, entities and properties.

When Microsoft provisions Table Storage, it assigns you an account. This account is the root of the hierarchy and contains tables. Note that Table Storage has nothing to do with SQL Server. It is based on a different, highly replicated non-relational storage technology. With that clarification out of the way, a table contains one or more entities. An entity is analogous to a row in RDBMS terms, and each entity consists of properties. A property holds a value of a given type and is analogous to a column. Remember, however, that Azure Table Storage is very different from an RDBMS like SQL Server, so it is more accurate to think of entities as property bags of key/value pairs than to hold on to old metaphors.

Some of the supported data types include:

- Binary (a byte array)

- Bool

- DateTime (a UTC timestamp)

- Double (64-bit floating point value)

- GUID

- Int (32-bit integer)

- Int64 (64-bit integer)

- String (which uses the UTF-16 encoding)

The Binary and String data types have an upper limit of 64 KB. This is for scalability and performance reasons (remember, normalization doesn’t really apply here).
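To make the property-bag idea and the 64 KB limit concrete, here is a minimal sketch in plain C#. It does not use the Azure SDK: `PropertyLimits` and its `Fits` method are hypothetical helpers invented for illustration, and the course values are made up. The only facts taken from the text above are the 64 KB limit on Binary and String values and the UTF-16 encoding of strings.

```csharp
using System;
using System.Collections.Generic;
using System.Text;

// Hypothetical helper for illustration; not part of the Azure SDK.
public static class PropertyLimits
{
    // 64 KB upper limit for Binary and String values, as described above.
    public const int MaxBytes = 64 * 1024;

    public static bool Fits(object value)
    {
        if (value is string)
        {
            // Strings are measured in their UTF-16 encoded size.
            return Encoding.Unicode.GetByteCount((string)value) <= MaxBytes;
        }
        if (value is byte[])
        {
            return ((byte[])value).Length <= MaxBytes;
        }
        return true; // fixed-size types such as Int or DateTime always fit
    }
}

public static class Program
{
    public static void Main()
    {
        // An entity modeled as a bag of named, typed values (sample data is made up).
        var course = new Dictionary<string, object>
        {
            { "Name", "Hypothetical Links" },
            { "ZipCode", "85001" },
            { "Holes", 18 },
            { "Registered", DateTime.UtcNow }
        };

        foreach (var property in course)
        {
            Console.WriteLine("{0} ({1}) fits: {2}",
                property.Key, property.Value.GetType().Name, PropertyLimits.Fits(property.Value));
        }

        // 32,768 UTF-16 characters occupy exactly 64 KB; one more character is too large.
        Console.WriteLine(PropertyLimits.Fits(new string('x', 32768))); // True
        Console.WriteLine(PropertyLimits.Fits(new string('x', 32769))); // False
    }
}
```

A check like this is only a local guard; the service itself remains the authority on what it accepts.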

Tables are partitioned to aid in scalability and performance. I’ll cover partitions in more detail later, but the idea is that you want to keep similar data physically together. Thinking about partitioning up front helps your queries and transactions run as efficiently as possible, given that entities may be distributed to meet the scalability and reliability goals that are central to Table Storage.

Development Storage

For simulating the Storage Service, the Windows Azure SDK includes an emulator intended for local development and testing called Development Storage. Development Storage provides a development experience that is very close to working with Azure Table Storage at the API level. As you will learn, however, the provisioning of storage and tables differs between Development Storage and Azure Storage.

The Fabric Controller

As I mentioned earlier, the affinity between applications and roles is an important part of the scalability story, and the Fabric Controller provides a nexus that binds the virtual machines in a data center together and addresses cross-cutting concerns such as monitoring and logging via role-specific agents that run on each virtual machine.

The Fabric Controller manages the creation and disposal of Virtual Machines which are Windows Server 2008 x64 machines running on Windows Hypervisor technology. Based on configured settings, the Fabric Controller will spin up new VMs as needed. In addition, the Fabric Controller is responsible for monitoring switches and load balancers in addition to the physical and virtual machines. When allocating VMs, it does so intelligently. For example, if a Web Role application is configured for four instances, it will allocate and deploy the VMs such that a hardware failure, say of one load balancer, doesn’t bring the entire application down.

An important component that provides the Fabric with real-time monitoring information is the Azure Agent, which is deployed on each VM. The agent provides the Fabric Controller with health and status information so that it is always aware of the status of the physical and logical components under its control.

As of today, authoritative sources (see the “Azure Services Platform Whitepapers” sidebar) report that each virtual machine maps to a single processor core, which is pretty impressive. This is where you start to really appreciate the scale we are talking about here. For example, there is a video on YouTube (see sidebar “Massive Scale”) of a reporter doing an on-site interview at one of the many Microsoft Azure data centers scattered around the world. This facility alone has 10 server rooms, each with a capacity to hold 30,000 physical servers. Some quick math suggests that if each physical machine contains 4 cores (which is probably conservative), an Azure data center can host 1,200,000 virtual machines. That is one million, two hundred thousand VMs, folks.
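The quick math can be written out as follows, assuming (as stated above) one virtual machine per core and 4 cores per physical server:

$$
10 \ \text{rooms} \times 30{,}000 \ \tfrac{\text{servers}}{\text{room}} = 300{,}000 \ \text{servers},
\qquad
300{,}000 \ \text{servers} \times 4 \ \tfrac{\text{cores}}{\text{server}} = 1{,}200{,}000 \ \text{cores} = 1{,}200{,}000 \ \text{VMs}
$$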

I have it on good authority that if a component in a server fails, be it memory, a disk in a RAID array or a network card, the machine is put into a failed state and replaced immediately by a standby machine, and the faulted machine is pulled from the rack.

If you are starting to appreciate the true scale of Windows Azure, you are not alone. As with most new technologies I get involved with, what usually gets me interested is that “oh wow” moment. If you are having such a moment and want to learn more about how these data centers work, take a look at the sidebar “Generation 4 Modular Data Centers.” It is truly fascinating.

The Local Development Fabric

As with Development Storage, the Windows Azure SDK includes a local development emulator called the “Development Fabric” which allows you to build, deploy and test your applications for Windows Azure in your local development environment. You might think of the Development Fabric as the Azure equivalent of Cassini, which provides an Azure hosting and debugging experience, only instead of mimicking IIS, it emulates Azure.

Pricing & Guarantees

Instead of worrying about provisioning, deploying and maintaining costly hardware infrastructure, Microsoft is making its tremendous investments in infrastructure available as a commodity service to businesses and entrepreneurs. These investments aren’t only in the almost unfathomable data centers with hot swappable computing clusters, but also in the expertise of infrastructure engineers who have dedicated entire careers to ensuring that Microsoft’s Web properties are almost always available.

Currently Windows Azure is in the CTP phase and is offering developers and organizations the ability to assess the Azure offering free of charge.

On July 14, 2009, Microsoft made public its concrete Service Level Agreements (SLAs) for Azure Storage and Azure Compute services:

- For Azure Storage, Microsoft guarantees that Azure Storage will process correctly formatted requests that are received to add, update, read and delete data 99.9% of the time.

- For Compute Services, Microsoft guarantees that when you deploy two or more role instances in different fault and upgrade domains, your Internet-facing roles will have external connectivity at least 99.95% of the time.

In addition, Microsoft has published a consumption-based pricing model based on the number and type of roles used, CPU time, storage size, bandwidth and transactional throughput. For more information, please see the Azure Services Platform FAQ at: http://www.microsoft.com/azure/faq.mspx#pricing

Note: The content covered in this article is based on the May 2009 CTP and is subject to change. As with any pre-RTM technology, developing with a pre-released version of the software should be considered as educational and to prove the technology’s viability within your problem domain.

Exploring the Green Fee Broker Sample Application

Green Fee Broker is a fictitious software + services cloud product which allows golfers to play their favorite courses at the lowest possible rate. Golfers install “My Green Fee Broker” on their machine, where it runs in the toolbar. A golfer can click the “My Green Fee Broker” icon, pick from a list of participating golf courses, and subscribe to alerts when a registered course publishes an available tee time. A golfer who wants to submit a booking offer can either do so via My Green Fee Broker running on the desktop, or log in to Green Fee Broker Online, a Web property that provides the ability to submit a booking request to the Green Fee Broker Service from any PC. From there, Green Fee Broker goes to work to search for the lowest possible rate available, books the course, and notifies the golfer of his or her tee time via a desktop alert and/or an e-mail notification.

Green Fee Broker caters to two key personas.

The Savvy Golfer

The golfer is a Green Fee Broker customer who is interested in finding the lowest possible rate at a list of participating golf courses. She is willing to be flexible around which course is booked, as long as it is in a list of courses that she is willing to play. She enjoys the fact that she has a great chance to get the lowest possible rate by simply submitting a request to the Green Fee Broker Service, which is always available in the cloud.

The Enterprising Golf Professional

The golf course pro is responsible for meeting revenue targets at his golf course. In a seasonal business, off-season quarters can be unpredictable, and as such, the pro is always looking for ways to entice golfers to play his course. Even in-season, unfilled tee times can be the difference between profitability and merely meeting payroll.

A day does not go by when there are not a number of open tee-times or last minute cancellations, and Green Fee Broker helps to maximize occupancy of these timeslots in a proactive manner. To a golf professional, Green Fee Broker is a service that drives traffic to his golf course which is registered with Green Fee Broker. Given Green Fee Broker’s active Internet campaign, the pro’s golf course is featured in banner ads across popular golf Web properties that attract golfers to browse a list of participating courses, register for the Green Fee Broker service and download the on-premise Green Fee Broker application.

The great thing about the Green Fee Broker Service is that it is always available and exposes an API that can be consumed either by the My Green Fee Broker application, Green Fee Broker online, or branded Web properties for specific golf courses that can use the Green Fee Broker Service API to submit a booking request on-demand or in response to a desktop alert.

The Green Fee Broker product is perfect for deployment to the cloud because at its core is a brokering engine that is exposed to any number of consumers who leverage the API.

As with any business venture, the success of this potential software + services product is unknown. However, by leveraging Windows Azure, I can start prototyping the application and making it available incrementally for public use without worrying about startup costs such as infrastructure or maintenance. Since I will only pay for the use of the application, I get great flexibility and the ability to defer expensive decisions, which allows me to focus on building the product.

The Green Fee Broker Service

In this article, I will explore a slice of functionality including exposing the ability to register a golf course, as well as the ability to retrieve currently registered courses via the Green Fee Broker Service. The Green Fee Broker Service is a WCF application that exposes two operations, RegisterCourse and GetCourses:

- RegisterCourse allows a golf pro to register his course with Green Fee Broker so that his course is provided in the list of available courses. Eventually, registered courses will be eligible to post available tee times and respond to booking requests from savvy golfers.

- GetCourses allows savvy golfers to request a list of golf courses that are registered with the Green Fee Broker Service.
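As a rough sketch of what a contract with these two operations could look like, consider the following. The operation names come from the description above and `IBrokerService` is the contract name used in the .Services project; everything else is an assumption made for illustration: the method signatures, the simplified `CourseInfo` data contract (the article's real entity is the `Course` class in the listings) and the `InMemoryBrokerService` implementation, which stores courses in a list instead of Table Storage. The sketch needs references to System.ServiceModel and System.Runtime.Serialization and calls the service in-process rather than hosting it.

```csharp
using System;
using System.Collections.Generic;
using System.Runtime.Serialization;
using System.ServiceModel;

// Simplified, hypothetical stand-in for the article's Course entity.
[DataContract]
public class CourseInfo
{
    [DataMember] public string Name { get; set; }
    [DataMember] public string ZipCode { get; set; }
}

// Assumed shape of the contract; the real signatures are in the article's listings.
[ServiceContract]
public interface IBrokerService
{
    [OperationContract]
    void RegisterCourse(CourseInfo course);

    [OperationContract]
    List<CourseInfo> GetCourses();
}

// In-memory implementation for illustration only; not the article's BrokerService.
public class InMemoryBrokerService : IBrokerService
{
    private readonly List<CourseInfo> courses = new List<CourseInfo>();

    public void RegisterCourse(CourseInfo course)
    {
        if (course == null) throw new ArgumentNullException("course");
        courses.Add(course);
    }

    public List<CourseInfo> GetCourses()
    {
        // Return a copy so callers cannot modify the internal list.
        return new List<CourseInfo>(courses);
    }
}

public static class Program
{
    public static void Main()
    {
        IBrokerService service = new InMemoryBrokerService();
        service.RegisterCourse(new CourseInfo { Name = "Hypothetical Links", ZipCode = "85001" });

        foreach (var course in service.GetCourses())
        {
            Console.WriteLine(course.Name + " (" + course.ZipCode + ")");
        }
    }
}
```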

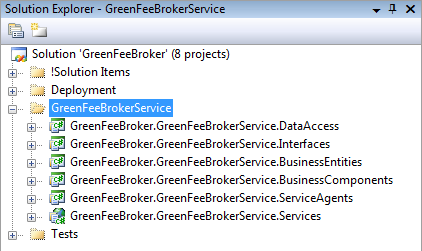

The Green Fee Broker Service application consists of the following projects/assemblies as seen in Figure 1:

- GreenFeeBroker.GreenFeeBrokerService.Services

- GreenFeeBroker.GreenFeeBrokerService.BusinessEntities

- GreenFeeBroker.GreenFeeBrokerService.Interfaces

- GreenFeeBroker.GreenFeeBrokerService.BusinessComponents

- GreenFeeBroker.GreenFeeBrokerService.ServiceAgents

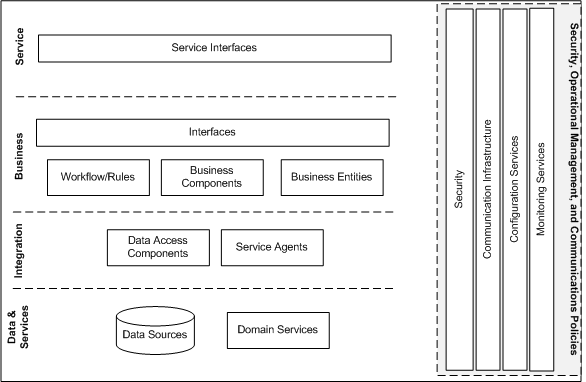

Hopefully, the purpose of each project is self-explanatory, and you will notice that the names of each assembly map quite logically to the layer diagram in Figure 2. The .Services project consists of a Service Contract (“IBrokerService”), a class called “BrokerService” that implements the Service Contract and some configuration for hosting the service. Speaking of configuration, this service exposes a single endpoint over HTTP using the BasicHttpBinding. Note that this is a plain vanilla WCF service. As you will see, nothing about the service will change in order for us to deploy it to Windows Azure: the familiar WCF mirepoix of address, binding and contract still applies.
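For readers who want to picture the hosting configuration, a single BasicHttpBinding endpoint in web.config typically looks something like the fragment below. This is a sketch rather than the article's actual configuration: the fully qualified type names assume that the classes live in a namespace matching the project name, and the empty address assumes the endpoint is served at the address of the .svc file.

```xml
<system.serviceModel>
  <services>
    <!-- Namespace is an assumption based on the project name. -->
    <service name="GreenFeeBroker.GreenFeeBrokerService.Services.BrokerService">
      <!-- The ABCs: address (relative to the .svc file), binding and contract. -->
      <endpoint address=""
                binding="basicHttpBinding"
                contract="GreenFeeBroker.GreenFeeBrokerService.Services.IBrokerService" />
    </service>
  </services>
</system.serviceModel>
```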

The Business Entities project contains a class called “Course” which is a model for a golf course. If you look at the code for the Course class, as seen in Listing 1, you will see that it inherits from a base type called TableStorageEntity. In addition, you will see a second class called “GreenFeeBrokerDataServiceContext” as shown in Listing 2. If you are familiar with the ADO.NET Entity Framework and ADO.NET Data Services you are likely feeling quite at home. If not, suffice it to say that these types represent an Azure Table Storage entity and corresponding context for working with Azure Table Storage entities. Don’t worry, this will all make sense soon.

The Interfaces project contains interfaces that are implemented by the Business Components and Service Agents and the Business Components project contains a class called CourseManager, which contains some basic business logic and delegates to the Integration Layer.

Last but not least, the Service Agent project contains a class called “TableStorageServiceAgent” which encapsulates the communication details of querying and issuing transactions to the Azure Table Storage service. If the Service Agent or Service Gateway pattern is new to you, see my article in the January/February 2008 issue of CODE Magazine (http://www.code-magazine.com/article.aspx?quickid=0801071&page=5).

You can see the code listing for the service agent in Listing 3. Again, if the code doesn’t make much sense at this point, don’t worry, it will soon.

Configuring GreenFeeBroker for Windows Azure

Aside from some interesting base types in the Course entity, the GreenFeeBrokerDataServiceContext class and some API calls you may not have seen before in the TableStorageServiceAgent, the Green Fee Broker Service application is a pretty straightforward .NET 3.5 application.

The beauty of Windows Azure is that it allows developers to work with the technologies they already know. Aside from some design considerations such as designing applications that are stateless (always a good practice for scalability if you can pull it off) and the integration of new technologies for dealing with storage, the development paradigm remains very much the same as any traditional .NET application.

So, how do you take a plain ol’ .NET 3.5 application and prepare it for Windows Azure? Let’s find out!

Creating the Cloud Service Project

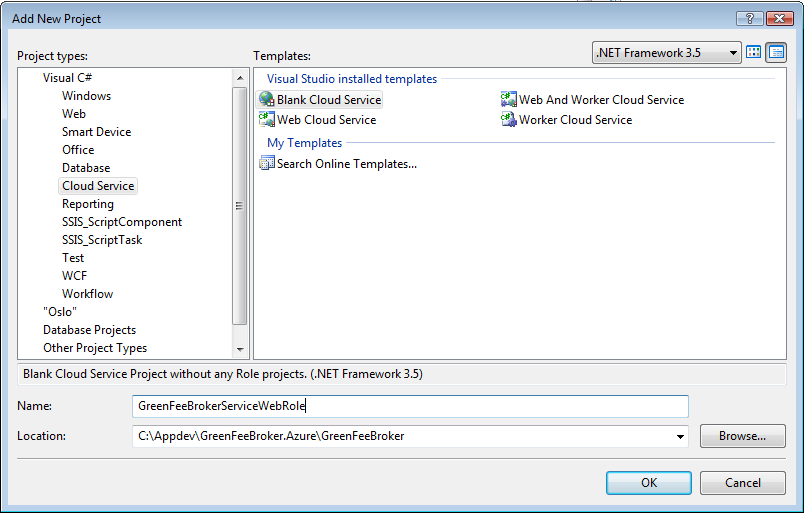

After installing the Windows Azure SDK, you will have a number of tools and utilities at your disposal for getting up to speed with Windows Azure including the Development Fabric, Development Storage, the DevTableGen utility and Visual Studio templates for Windows Azure. I will cover all of these tools shortly, but first, let’s create a Cloud Service Project. The best explanation I can give for a Cloud Service Project is that it is a configuration container for preparing an application for testing locally in the Development Fabric, and deployment to Windows Azure in the cloud. To this end, it is somewhat analogous to a Windows Installer project, but for the cloud.

From within Visual Studio 2008, go to File, New Project. In the current CTP, a project type called “Cloud Service” is available. In my installation of Visual Studio, it is about half way down from the top. Next, select the “Blank Cloud Service” project template and provide a name. In this case, I called it “GreenFeeBrokerServiceWebRole” to denote that the project is a configuration container for the Green Fee Broker Service application which will be configured and deployed as a Web Role. Figure 3 shows what the Add New Project dialog box should look like at this point.

Recall that Windows Azure supports two main roles, Web and Worker. Since each role has some differences in configuration, you must tell the Cloud Service Project what kind of role you are configuring.

CTP Hack Alert!

One of the fun (OK, sometimes frustrating) things about playing with CTP bits is that sometimes things either don’t work quite as intuitively as you’d like, or alternate use cases or scenarios just haven’t been implemented yet. Just like my VW bug in college that required me to hold the door handle from the inside and give the door a good whack just below the lock to get it to open, if you want to configure an existing application for a role (as we do), you have to look at and modify some XML. I had been going around and around trying to find an elegant solution to this, and just as I was about to give up, I ran across a blog post by Jim Nakashima, a Program Manager on the Azure team, who held the missing link (http://blogs.msdn.com/jnak/archive/2009/02/05/using-an-existing-asp-net-web-application-as-a-windows-azure-web-role.aspx). His post describes how to configure an existing WCF Web Application to support a Web Role within a Cloud Service project:

- Right-click the GreenFeeBroker.GreenFeeBrokerService.Services project and click Unload Project.

- Next, right-click the GreenFeeBroker.GreenFeeBrokerService.Services project again and select Edit GreenFeeBroker.GreenFeeBrokerService.Services.csproj.

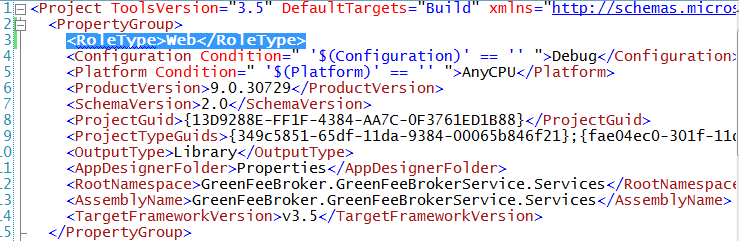

- As shown in Figure 4, add a RoleType element with a value of Web to the PropertyGroup element. Save the GreenFeeBroker.GreenFeeBrokerService.Services.csproj file.

- Right-click the GreenFeeBroker.GreenFeeBrokerService.Services project and click Reload Project.
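In text form, the edit from the steps above amounts to something like the following fragment of the .csproj file. Only the RoleType element and its value come from the steps; which PropertyGroup it belongs in and what the surrounding properties look like are assumptions here, so treat Figure 4 as the reference.

```xml
<PropertyGroup>
  <!-- The project's existing properties stay as they are. -->
  <!-- Added line: marks this project as eligible to be a Web Role. -->
  <RoleType>Web</RoleType>
</PropertyGroup>
```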

At this point, I have hacked the plain ol’ WCF Web Application project to be eligible as a Web Role. As an alternative you could create a new Web Role project, relocate all of your artifacts to the new project and delete the old one.

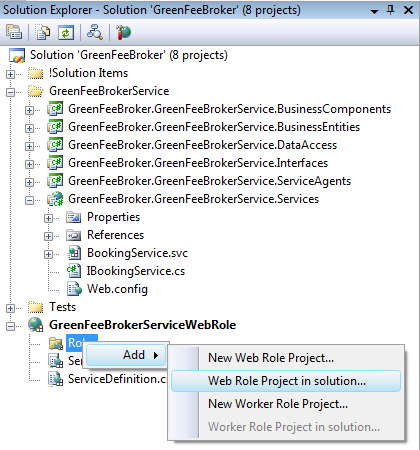

At this point, right-click the Roles folder in the GreenFeeBrokerServiceWebRole Cloud Service project, select Add and select Web Role Project in solution as shown in Figure 5.

The Associate with Role Project dialog box appears. Select the GreenFeeBroker.GreenFeeBrokerService.Services project, which should be the only one in the list and click OK.

Verifying Configuration Files

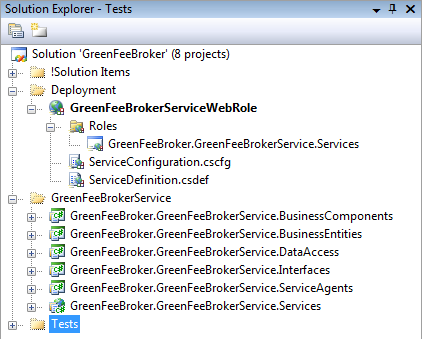

At this point the solution resembles Figure 6. Note the use of solution folders to keep the solution nice and tidy. You will notice three new artifacts: the GreenFeeBroker.GreenFeeBrokerService.Services link under Roles, a service configuration file aptly called ServiceConfiguration.cscfg and a service definition file called ServiceDefinition.csdef. Listings 4 and 5 contain the configuration within each of the files. I am not going to go into detail here, but from the contents, it is evident that the service configuration file denotes that the application is intended to be deployed to the Web Role, and that the default number of instances the Fabric Controller will allocate is 1. The service definition defines an input endpoint that accepts requests on HTTP port 80. Note that this could be changed to HTTPS/443 if SSL is required, but only HTTP/80 is supported in the Development Fabric.
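If you do not have Listings 4 and 5 at hand, the two files look roughly like the sketches below. These are reconstructions from memory of the CTP-era schema, not copies of the listings: the XML namespaces, the role name `WebRole` and the endpoint name `HttpIn` are assumptions, and the names in your generated files may differ. The service definition declares the role and its HTTP endpoint on port 80:

```xml
<!-- ServiceDefinition.csdef (sketch; names and namespace are assumptions) -->
<ServiceDefinition name="GreenFeeBrokerServiceWebRole"
                   xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
  <WebRole name="WebRole">
    <InputEndpoints>
      <InputEndpoint name="HttpIn" protocol="http" port="80" />
    </InputEndpoints>
  </WebRole>
</ServiceDefinition>
```

The service configuration sets the instance count for that role:

```xml
<!-- ServiceConfiguration.cscfg (sketch; names and namespace are assumptions) -->
<ServiceConfiguration serviceName="GreenFeeBrokerServiceWebRole"
                      xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration">
  <Role name="WebRole">
    <Instances count="1" />
    <ConfigurationSettings />
  </Role>
</ServiceConfiguration>
```

Raising the `count` value is how you would ask the Fabric Controller for more instances of the role.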

Programming Tables for Table Storage (Making the Sausage)

An adage often attributed to Otto von Bismarck (and sometimes to Mark Twain) suggests that there are two things man should never see: the making of sausage, and the passing of law.

While I love the idea of being able to simulate the Azure Storage Service locally, I find the process of configuring Table Storage a bit quirky. First, remember as I noted earlier that Azure Storage, be it Table, Queue or Blob storage, has nothing to do with SQL Server. However, in order to emulate Azure Storage in Development Storage, SQL Server 2005 or later is a requirement. At first this seems pretty straightforward, and I think the rationale makes sense, but getting Table Storage up and running both in Development Storage and Azure Storage can be a bit confusing.

Here’s the basic idea. Table Storage is exposed via a service that conforms to the ADO.NET Data Services API. This means that interaction with the underlying tables or entities is done via REST, and the ADO.NET Data Services client library hides many of the details, which is great for productivity. Many will naturally say that this is a great fit for REST because the tables and entities in Table Storage can simply be thought of as resources that Web/Worker Roles in the cloud or any on-premise application can consume. I will admit that as a SOAP guy, the idea of using HTTP to manually create resources (i.e., tables) via code is still a bit foreign to me, but with the help of my friends (you know who you are), I am proud to say that I am on the road to recovery.

Anyway, one way or another, you need to create the tables that will store your entities. The mechanics of creating the underlying storage for your tables is vastly different when using the local Development Storage versus the actual Azure Table Storage but under the covers, the process is very similar as you will soon learn.

Creating Tables for the Development Storage Table Storage Service

There are at least two approaches for creating a table in the local Development Storage. One involves Visual Studio, and the other requires the use of a utility that ships with the Azure SDK called DevTableGen.exe. The former approach is very simple. You simply right-click on the Cloud Service project (in this case GreenFeeBrokerServiceWebRole) and select Create Test Storage Tables.

Here is where the magic sausage making process begins…

Recall the Course and GreenFeeBrokerDataServiceContext classes from Listing 1 and Listing 2. The Course class inherits from the TableStorageEntity class that ships with the Azure Storage Client, which is provided as part of the Azure SDK. The TableStorageEntity class simply provides some boilerplate properties that are required for Azure Table Storage entities. These properties are Timestamp, PartitionKey and RowKey. Recall from our brief discussion about Azure Table Storage that tables are partitioned. A table is partitioned by means of a string known as the PartitionKey. Each entity in Azure Table Storage is uniquely identified by the combination of the partition key and the row key. The constructor for the Course entity accepts the partition key and row key. This allows us to inject the appropriate values when we instantiate a new Table Storage entity.

In my case, I am somewhat naively using a generic partition called Courses as my partition key. Notice that each course consists of a ZipCode property. This property might make for a good partition key candidate because it would help ensure that golf courses are grouped by zip code when they are physically stored. There are, however, some drawbacks to this approach, and partitioning is a big topic unto itself, so for simplicity, I’ve chosen an arbitrary partition key called Courses.
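The trade-off between a single generic partition and a per-zip-code partition can be illustrated with a small in-memory model. This is a sketch in plain C# with no Azure SDK dependency: `CourseRecord` and `InMemoryTable` are hypothetical types invented for this example, the sample data is made up, and the real service distributes and stores partitions in ways this model does not attempt to capture. It only demonstrates the two rules described above: the PartitionKey groups entities, and the PartitionKey/RowKey pair must be unique.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for an entity; the article's Course derives from TableStorageEntity.
public class CourseRecord
{
    public string PartitionKey { get; private set; }
    public string RowKey { get; private set; }
    public string Name { get; set; }
    public string ZipCode { get; set; }

    public CourseRecord(string partitionKey, string rowKey)
    {
        PartitionKey = partitionKey;
        RowKey = rowKey;
    }
}

// Hypothetical in-memory model of a partitioned table.
public class InMemoryTable
{
    // Outer key: PartitionKey. Inner key: RowKey.
    private readonly Dictionary<string, Dictionary<string, CourseRecord>> partitions =
        new Dictionary<string, Dictionary<string, CourseRecord>>();

    public int PartitionCount { get { return partitions.Count; } }

    public void Insert(CourseRecord record)
    {
        Dictionary<string, CourseRecord> partition;
        if (!partitions.TryGetValue(record.PartitionKey, out partition))
        {
            partition = new Dictionary<string, CourseRecord>();
            partitions[record.PartitionKey] = partition;
        }
        if (partition.ContainsKey(record.RowKey))
        {
            throw new InvalidOperationException("Duplicate PartitionKey/RowKey pair.");
        }
        partition[record.RowKey] = record;
    }
}

public static class Program
{
    public static void Main()
    {
        string[][] courses =
        {
            new[] { "1", "Course A", "85001" },
            new[] { "2", "Course B", "85001" },
            new[] { "3", "Course C", "98052" }
        };

        var generic = new InMemoryTable(); // one partition called "Courses"
        var byZip = new InMemoryTable();   // one partition per zip code

        foreach (var c in courses)
        {
            generic.Insert(new CourseRecord("Courses", c[0]) { Name = c[1], ZipCode = c[2] });
            byZip.Insert(new CourseRecord(c[2], c[0]) { Name = c[1], ZipCode = c[2] });
        }

        Console.WriteLine(generic.PartitionCount); // 1
        Console.WriteLine(byZip.PartitionCount);   // 2
    }
}
```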

Aside from partitioning, and the role of the PartitionKey and RowKey in uniquely identifying a Table Storage entity, the Course entity acts as a template for creating the Table Storage table, both locally and in Azure.

When you run Create Test Storage Tables against the Cloud Service project, it reflects over the assembly corresponding to the Web Role application (in this case, GreenFeeBroker.GreenFeeBrokerService.Services.dll) and looks for a type that both inherits from System.Data.Services.Client.DataServiceContext and includes a property of type IQueryable<T>. If you look closely at the GreenFeeBrokerDataServiceContext class in Listing 2, you will see that it meets both of these requirements. GreenFeeBrokerDataServiceContext inherits from TableStorageDataServiceContext (included in the StorageClient.dll that is provided as part of the Azure SDK). TableStorageDataServiceContext in turn inherits from System.Data.Services.Client.DataServiceContext, which provides the ADO.NET Data Services infrastructure for communicating with the Table Storage service.
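The kind of reflection described here can be sketched in a few lines. This is not the tool's actual implementation: to keep the example free of SDK dependencies, `StandInDataServiceContext` is a hypothetical placeholder for System.Data.Services.Client.DataServiceContext, `SampleContext` and `CourseRow` are made-up types, and the idea that a table is named after the IQueryable<T> property is an assumption consistent with the Courses table mentioned in this article.

```csharp
using System;
using System.Linq;
using System.Reflection;

// Hypothetical placeholder for System.Data.Services.Client.DataServiceContext.
public abstract class StandInDataServiceContext { }

public class CourseRow
{
    public string Name { get; set; }
}

// Made-up context: derives from the base type and exposes an IQueryable<T> property.
public class SampleContext : StandInDataServiceContext
{
    public IQueryable<CourseRow> Courses
    {
        get { return Enumerable.Empty<CourseRow>().AsQueryable(); }
    }
}

public static class TableDiscovery
{
    // Finds every IQueryable<T> property on concrete context types in the assembly.
    public static string[] FindTableNames(Assembly assembly)
    {
        return assembly.GetTypes()
            .Where(t => t.IsClass && !t.IsAbstract
                        && typeof(StandInDataServiceContext).IsAssignableFrom(t))
            .SelectMany(t => t.GetProperties(BindingFlags.Public | BindingFlags.Instance))
            .Where(p => p.PropertyType.IsGenericType
                        && p.PropertyType.GetGenericTypeDefinition() == typeof(IQueryable<>))
            .Select(p => p.Name)
            .ToArray();
    }
}

public static class Program
{
    public static void Main()
    {
        foreach (var name in TableDiscovery.FindTableNames(typeof(SampleContext).Assembly))
        {
            Console.WriteLine(name);
        }
    }
}
```

Seen this way, the context class acts as the declaration of which tables should exist, and the entity type supplies the columns.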


When Create Test Storage Tables completes, provided you have at least SQL Server Express installed and have permission to the default instance (.\SQLExpress), Visual Studio will create a new database called GreenFeeBrokerServiceWebRole with a table called Courses. This is by far the simplest way to create the tables for Development Storage (provided of course you have created your TableStorageEntity template and TableStorageDataServiceContext class), but you are stuck with a database name that defaults to the name of the project. Since my actual Table Storage account will not be named GreenFeeBrokerServiceWebRole, I prefer to have more control over the naming to maintain as much fidelity as possible between local development and Azure Storage.

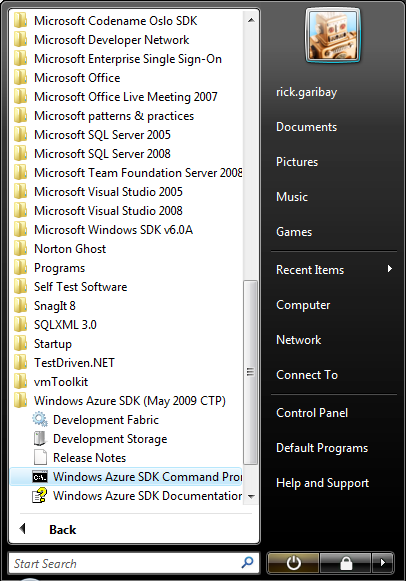

If you are like me, then DevTableGen.exe is for you. This utility ships with the Azure SDK and gives you a little more control over the database server and database name. The easiest way to use the tool is to fire up the Windows Azure SDK Environment command prompt located in the Windows Azure SDK program group as shown in Figure 7. With the command prompt open, navigate to the bin directory of the GreenFeeBroker.GreenFeeBrokerService.Services project that is linked as a Web Role to the GreenFeeBrokerServiceWebRole Cloud Service project. DevTableGen.exe accepts a semicolon-separated list of assembly names, a /database switch for passing in the name of the database, and optional /server and /forcecreate switches. If you omit the /server switch, the tool will attempt to create the database and table(s) in the SQLExpress instance. If the database already exists, you can use the /forcecreate switch to drop and recreate the database and tables (note that all of your entities/rows and corresponding properties/columns will be lost).


The code snippet below provides an example of running the DevTableGen.exe tool with a single assembly, /database switch and the /forcecreate option:

DevTableGen.exe GreenFeeBroker.GreenFeeBrokerService.Services.dll /database:GreenFeeBroker /forcecreate

At this point, the Courses table is allocated for Development Storage in a SQL Server database called GreenFeeBroker.

Creating Tables for the Azure Storage Table Storage Service

I think that choosing to use SQL Server for allocating Development Storage makes a lot of sense because developers already know SQL Server and it is likely already available in a development environment, which makes for one less component to download, install, configure and learn. Often, the best way to learn new things is to understand how they compare with existing knowledge. So, while SQL Server has nothing to do with Azure Table Storage, most .NET developers are comfortable with SQL Server and it makes sense as a local development store.

What can be a bit confusing is that the process for actually allocating Table Storage in Azure Storage is completely different. Allocating Table Storage requires the creation of a Table Storage account and programmatically creating the table(s).

To create a Table Storage account in Windows Azure, you must log in to the Azure Services Developer Portal. You must have a Windows Azure account and corresponding Live ID credentials in order to proceed. If you do not, please see the sidebar “Requesting an Invitation Code for an Azure Account.”

Here are the steps for allocating Table Storage in Azure Storage:

- Log in to the Azure Services Developer Portal at https://lx.azure.microsoft.com/Cloud/Provisioning

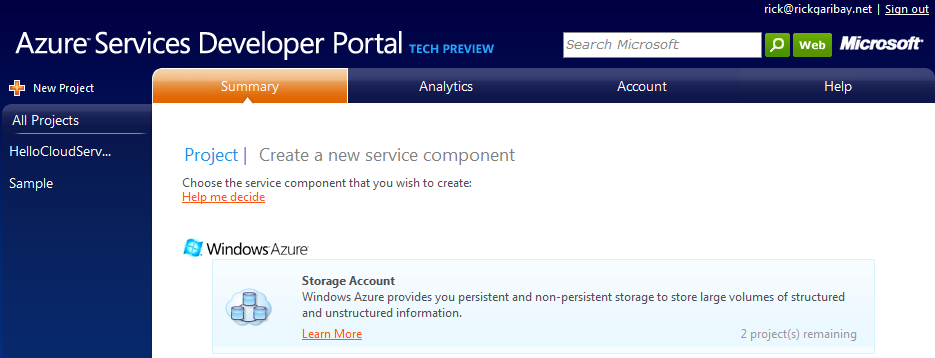

- Click the New Project link in the upper right corner. This will take you to the Create a new service component screen. Under Windows Azure, click on the Storage Account component as shown in Figure 8.

- On the Project Properties screen, enter a friendly description that will help you keep track of your project and click Next.

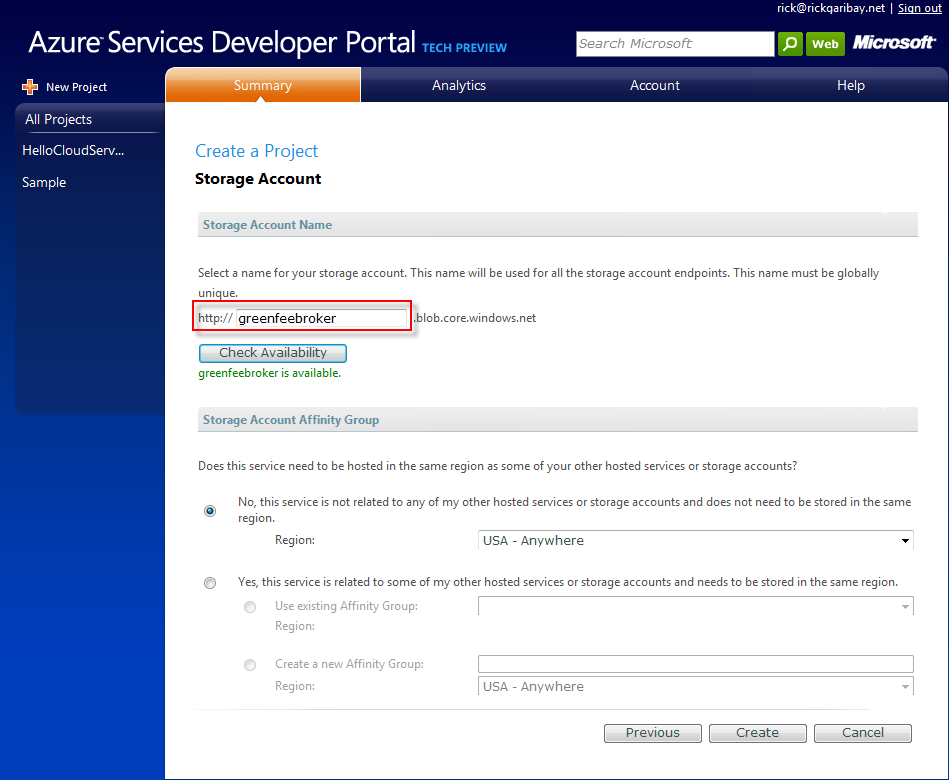

- On the Create a Project screen, enter the account name you want to use. Note that this name will be used to name your storage account as well as access the Table Storage service, so it must be unique. To that end, click Check Availability to verify that the account name is unique or simply click Create, accepting the defaults as shown in Figure 9.

At this point, you have created an Azure Table Storage account. In my case, I named it GreenFeeBroker, just as I named my database in the local Development Storage. This will be one less configuration change I’ll need to make when I get ready to deploy the Green Fee Broker application to Windows Azure.

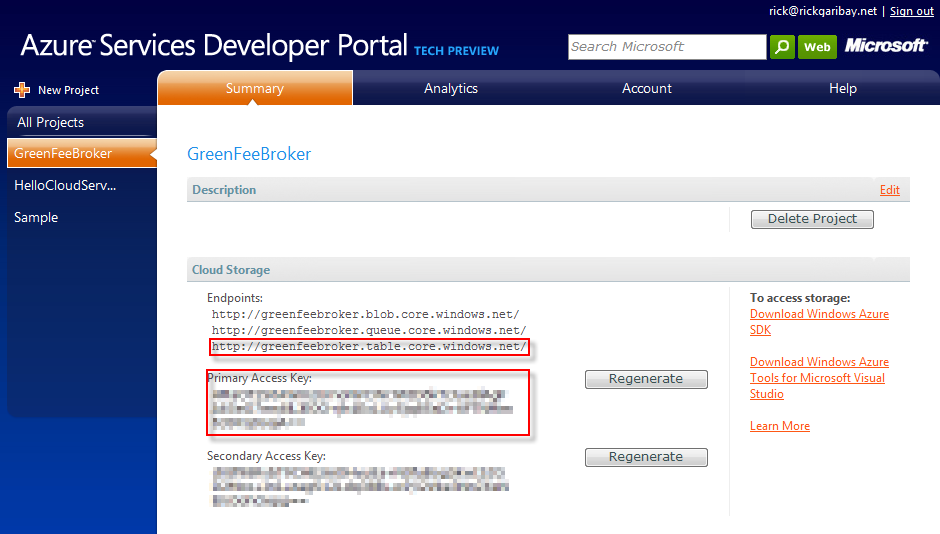

Figure 10 shows the project and corresponding project properties. Note two very important pieces of information: the URI for accessing your Azure Table Storage account, and your Primary Access Key for authenticating and being authorized by the Table Storage service. The first thing you might want to do is paste the URI in your favorite browser and test it. Unfortunately, this won’t work because a browser gives you no easy way to supply the authentication information, which must be sent in an HTTP header. You can, however, use Fiddler, a very useful HTTP debugging proxy for submitting and monitoring HTTP requests. See the sidebar “Using Fiddler to Test Table Storage” for an excellent step-by-step guide by Rob Bagby.
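To give a feel for why a browser alone is not enough, here is a minimal sketch of how an Authorization header can be derived from the access key. It follows the Shared Key Lite scheme for the Table service as I understand it (an HMAC-SHA256 over the request date and the canonicalized resource), and it is an assumption that the scheme applies unchanged to your SDK version. The account name, the /Tables resource path and the placeholder key are illustrative only; the StorageClient library normally does this work for you.

```csharp
using System;
using System.Globalization;
using System.Security.Cryptography;
using System.Text;

public static class SharedKeyLiteSketch
{
    // Builds an Authorization header value in the assumed Shared Key Lite form:
    //   SharedKeyLite <account>:<Base64(HMAC-SHA256(key, date + "\n" + resource))>
    public static string BuildAuthorizationHeader(
        string accountName, string base64Key, string resourcePath, string rfc1123Date)
    {
        // The canonicalized resource is the account name followed by the URI path.
        string canonicalizedResource = "/" + accountName + resourcePath;
        string stringToSign = rfc1123Date + "\n" + canonicalizedResource;

        using (var hmac = new HMACSHA256(Convert.FromBase64String(base64Key)))
        {
            byte[] hash = hmac.ComputeHash(Encoding.UTF8.GetBytes(stringToSign));
            return "SharedKeyLite " + accountName + ":" + Convert.ToBase64String(hash);
        }
    }

    public static void Main()
    {
        // Placeholder key for illustration; use the Primary Access Key from the portal.
        string placeholderKey = Convert.ToBase64String(Encoding.UTF8.GetBytes("not-a-real-key"));
        string date = DateTime.UtcNow.ToString("R", CultureInfo.InvariantCulture);

        Console.WriteLine("x-ms-date: " + date);
        Console.WriteLine("Authorization: " +
            BuildAuthorizationHeader("greenfeebroker", placeholderKey, "/Tables", date));
    }
}
```

Because the signature covers the request date, the header has to be recomputed for each request, which is what makes a tool such as Fiddler (or the client library) necessary.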

With your Azure Table Storage account ready, it is time to create the Courses table. Because the Azure Table Storage account is exposed via a REST/ADO.NET Data Service endpoint, the next step is to create the Courses resource. There is a decently documented REST API in the Azure SDK for doing this, but the easiest way to do this is again to use the StorageClient API. The trick is that you only want to create the table once, and since today, there is no designer or management support for doing so, you must create the table programmatically.

I could go off and write a little utility to do this, but I really like the idea of a SOA application being able to bootstrap itself. To bootstrap GreenFeeBroker to provision its own table(s) on the fly, I’ve added a call to a TableStorageServiceAgent method called ProvisionTable within the RegisterCourse method, which is exposed as a WCF service operation. Listing 6 contains the ProvisionTable method within the TableStorageServiceAgent class.

Note that this will only work when the Green Fee Broker service application is configured for the Azure Storage Table Storage URI. In other words, the dynamic creation of tables is only supported in Azure Storage, not in Development Storage.

CTP Hack Alert!

There is likely a more elegant way to approach this, but for the purposes of this article, I’ve simply added an #if DEBUG directive to the RegisterCourse method so that the provisioning call is skipped in Debug builds. Since I will always be working locally in Debug mode, the only time I need to execute the method is when running in Windows Azure, in which case I should be in Release mode.
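Here is one way the directive could be arranged, as a minimal sketch rather than the code from the listings. The ProvisionTable and RegisterCourse bodies below are hypothetical stand-ins that only write to the console, and the use of #if !DEBUG is my assumption about how to express “Release builds only”.

```csharp
using System;

public static class BootstrapSketch
{
    // Counts provisioning calls so the effect of the directive is observable.
    public static int ProvisionCalls;

    // Hypothetical stand-in for TableStorageServiceAgent.ProvisionTable.
    private static void ProvisionTable()
    {
        ProvisionCalls++;
        Console.WriteLine("Provisioning the Courses table...");
    }

    // Hypothetical stand-in for the RegisterCourse service operation.
    public static void RegisterCourse(string courseName)
    {
#if !DEBUG
        // Compiled only into Release builds, which is how the article
        // distinguishes a Windows Azure deployment from local development.
        ProvisionTable();
#endif
        Console.WriteLine("Registering " + courseName);
    }

    public static void Main()
    {
        RegisterCourse("Sample Course");
    }
}
```

The trade-off is that the behavior depends on the build configuration rather than on where the code is actually running, so a Release build run locally would still attempt to provision the table.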

Testing in the Azure Development Fabric

Any sound application lifecycle includes testing in the local development environment. Windows Azure provides great support for ALM by providing a Windows Azure simulated environment for local development, support for pushing the application to a staging environment in Windows Azure for full integration and performance testing, and finally going live in production.

Configuring and Starting Development Storage

With the Courses table created in the Development Storage Table Storage database, it is time to start the local Table Storage Service and configure it to use the GreenFeeBroker account/database you created earlier so that you can do some end-to-end functional testing of the Green Fee Broker application.

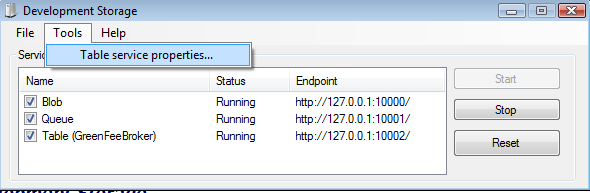

Find the Development Storage icon in the Windows Azure SDK program group (which you saw in Figure 7) and click it to launch Development Storage. When the Development Storage manager appears, you will notice service endpoints for blob, queue and table storage, which should transition from Stopped to Starting to Running. At this point, you must configure the table service endpoint to use the GreenFeeBroker database you created in SQL Server earlier. To do so, click Tools and select Table service properties. A dialog box appears that allows you to select from a list of databases. In this case you’ll select GreenFeeBroker, which is configured and running as shown in Figure 11.

Debugging GreenFeeBroker in the Development Fabric

With Development Storage configured and running, it is now time to start the Development Fabric. As discussed briefly before, the Development Fabric emulates the Compute Service in the Azure Fabric providing the ability to verify a local deployment and conduct some initial testing.

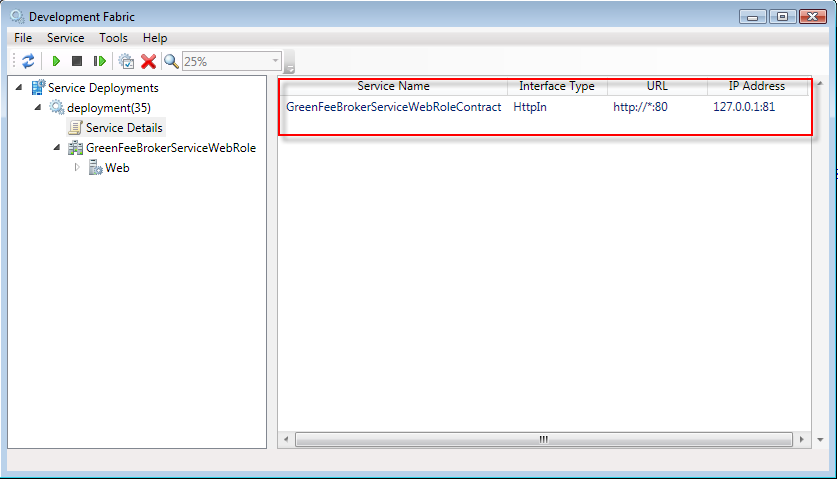

Hit F5 in Visual Studio to start debugging and pay attention to the lower left-hand corner of the IDE. You will see a series of statuses indicating that the application is being prepared, packaged and deployed to the local Development Fabric. In just a few moments, the Development Fabric console will launch. On the console, notice the tree which includes the current deployment, a service details icon and the GreenFeeBrokerServiceWebRole deployment. Click on the Service Details icon and notice the properties as shown in Figure 12. These properties correspond to the service properties in the ServiceDefinition.csdef file within the GreenFeeBrokerServiceWebRole Cloud Service project, namely the endpoint type, protocol, port and local address.

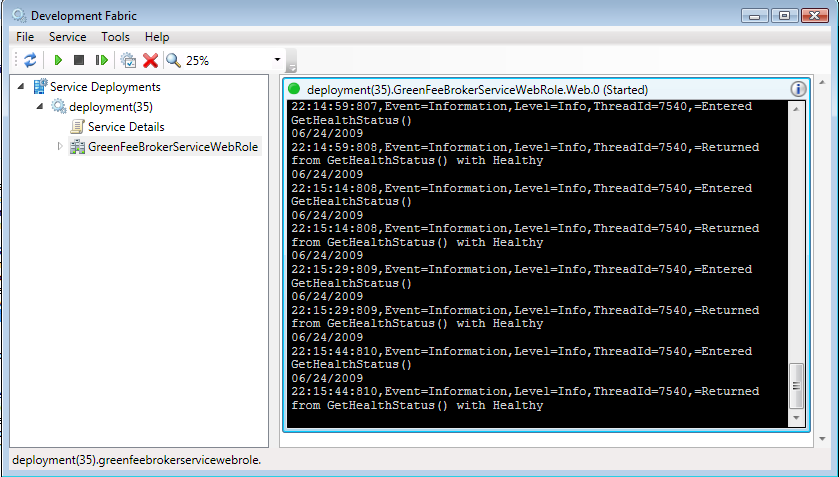

Next, click the GreenFeeBrokerServiceWebRole icon and notice the embedded console window. Click on the “I” icon in the upper right-hand corner to expand it. As shown in Figure 13, the console includes log entries representing the heartbeats being sent to the Development Fabric by the local agent emulator. Pretty cool, huh?

Generating the Proxy

Now that Green Fee Broker has been hosted and is running in the Development Fabric, let’s test the service to make sure all is well.

CTP Hack Alert!

In the current version of Windows Azure, the Web Role does not support WSDL/MEX endpoints. The reason for this is that when a WCF hosted service is started, the default behavior is to assume that the MEX endpoint is that of the machine hosting the service. Because your application is deployed across several VMs on different physical machines that are fronted by load balancers, the service reports an internal port that is not accessible outside of the Windows Azure cloud, because the only endpoint exposed publicly is that of the Virtual IP Address (VIP) assigned to a load balancer.

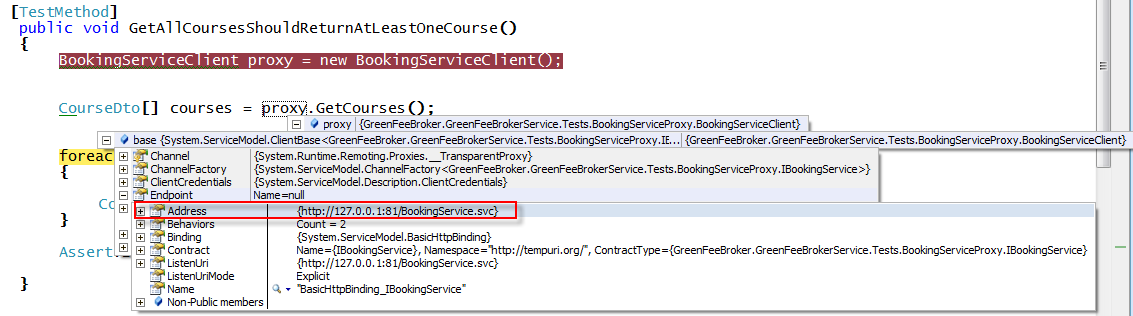

The workaround is simple. Publish the WCF Service (in this case the GreenFeeBroker.GreenFeeBrokerService.Services project) to IIS, and use this endpoint to generate the proxy. Then, in the client configuration, change the address to http://127.0.0.1:81/BrokerService.svc.

Updating Configuration

For the smoke test, I’ll use a unit test as an integration test. The app.config for the test project must contain the WCF client configuration which will be generated once the proxy is generated using the guidance above. As shown in Listing 7, make sure that you set the address attribute in the endpoint element to http://127.0.0.1:81/BrokerService.svc.
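If you would rather not edit the configuration file each time the target changes, the address can also be supplied in code. The following is a minimal sketch of that alternative, not the approach used in Listing 7. IBrokerServiceStandIn and its operation are hypothetical stand-ins for the generated service contract, and BasicHttpBinding is an assumption about the binding the service uses. It requires a reference to System.ServiceModel.

```csharp
using System;
using System.ServiceModel;

// Hypothetical stand-in for the service contract behind the generated proxy.
[ServiceContract]
public interface IBrokerServiceStandIn
{
    [OperationContract]
    string[] GetAllCourseNames();
}

public static class EndpointOverrideSketch
{
    // Creates a channel factory that targets the given address instead of
    // whatever address the proxy was originally generated against.
    public static ChannelFactory<IBrokerServiceStandIn> CreateFactory(string address)
    {
        // Assumption: the service is exposed over basicHttpBinding.
        return new ChannelFactory<IBrokerServiceStandIn>(
            new BasicHttpBinding(), new EndpointAddress(address));
    }

    public static void Main()
    {
        // The Development Fabric address used in this article.
        ChannelFactory<IBrokerServiceStandIn> factory =
            CreateFactory("http://127.0.0.1:81/BrokerService.svc");

        // No request is sent here; this only shows which address will be used.
        Console.WriteLine(factory.Endpoint.Address.Uri);
    }
}
```

The same helper could later be pointed at the staging or production URL without regenerating the proxy.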

Now I’ll turn your attention to the GreenFeeBrokerServiceWebRole Cloud Service project. As I discussed earlier, a Cloud Service project contains two important configuration files: ServiceConfiguration.cscfg and ServiceDefinition.csdef. Listings 4 and 5 show the configuration for each respective file. While the ServiceDefinition.csdef file will remain static for the rest of this article, you will toggle settings in the ServiceConfiguration.cscfg file to support both local development and Windows Azure configurations.

Testing Green Fee Broker in the Development Fabric

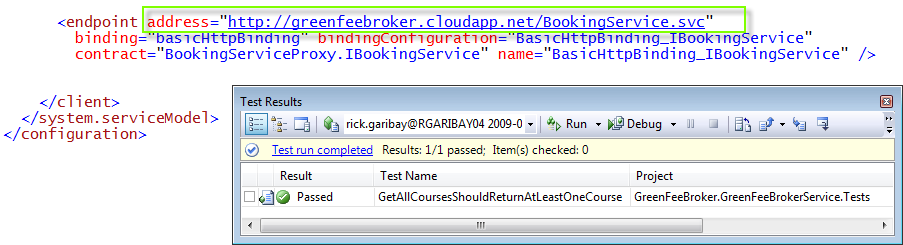

In Visual Studio click on Debug, and select Start without Debugging or simply press Ctrl+F5. The application will once again be deployed to the Development Fabric. Locate the ClientHarnessTest unit test class file in the GreenFeeBroker.GreenFeeBrokerService.Tests project and run the GetAllCoursesShouldReturnAtLeastOneCourse method. As shown in Figure 14, if you break into the test method and inspect the proxy, you should see that the address is actually that of the Development Fabric endpoint (not the local IIS version of the application that you used to generate the proxy).

Now, run the InsertCourseShouldReturnMinusOne test, which will create a new Course entity with the properties defined in the Course class.

If all is well, both tests should pass.

Deploying GreenFeeBroker in 5 Easy Steps

With local testing complete, it’s time to prepare your WCF application for deployment to Windows Azure. This process is incredibly straightforward and will likely get even easier as the technology matures.

Ready? Let’s go!

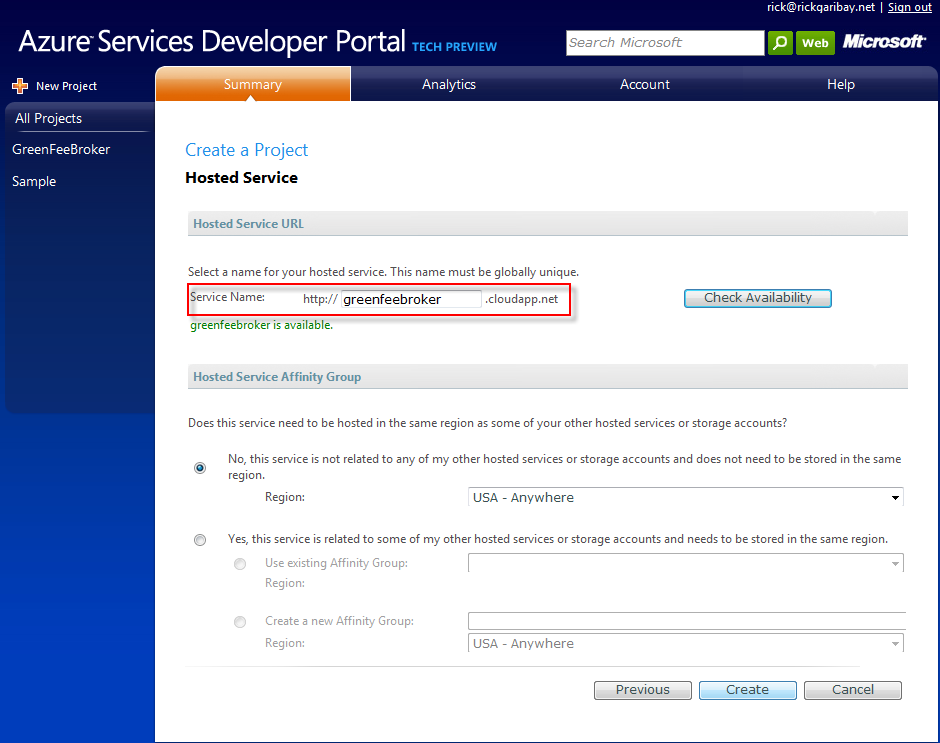

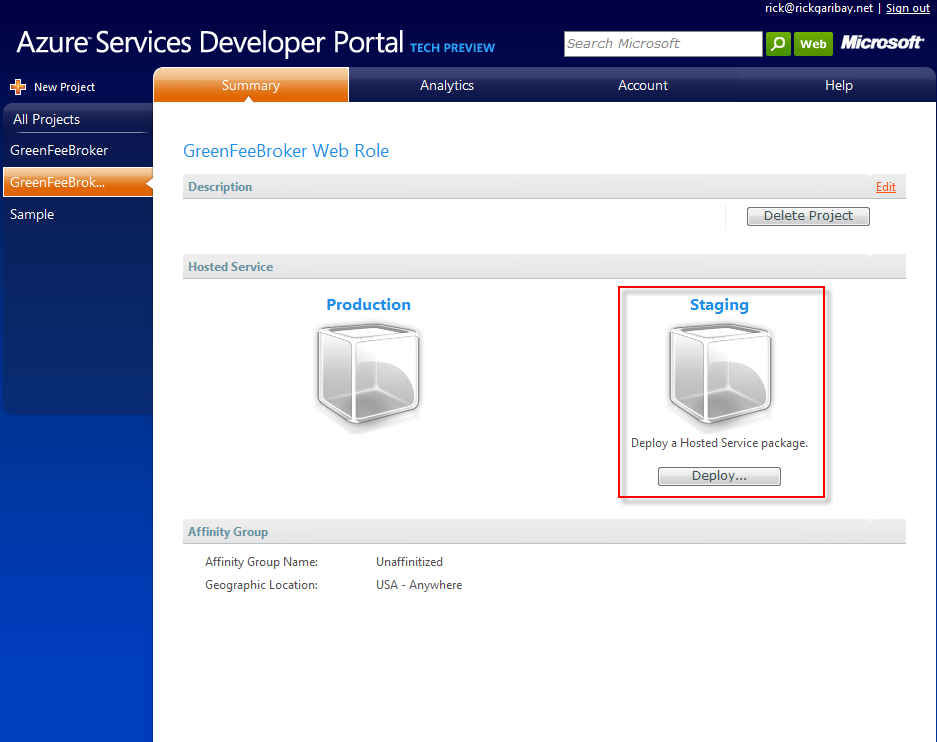

Step 1: Creating an Application in the Windows Azure Developer Portal

Just like with Table Storage, the first thing you need to do is provision a Compute Service Web Role that will host your application. To do this, log in to the Azure Services Developer Portal and click on New Project. This time, select the Hosted Services component under Windows Azure. Again, provide a friendly project label. In this case, I’ll choose the GreenFeeBroker Web Role to differentiate from the Storage component I created earlier. Click Next, and on the Create a Project page, enter a unique name for your application (in this case, I’ve chosen GreenFeeBroker as shown in Figure 15). Click Create. A Compute Service account is provisioned and the Green Fee Broker Web Role project is ready for deployment!

Step 2: Updating the ServiceConfiguration.cscfg File for Windows Azure

It’s time to update the service configuration for the big time.

Open the ServiceConfiguration.cscfg file and change all of the settings to use the Azure Storage settings. As shown in Listing 4, I’ve simply added configuration for both local and Azure so that I can easily toggle them. Keep in mind that this will only work with your own application and key; you can’t use mine. Notice, however, that in addition to different keys and endpoint URIs, the UsePathStyleUris property is set to false for the Azure configuration. For reasons I won’t get into here, you must set the UsePathStyleUris property to false if you want your application to work in Azure Storage.
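As a rough illustration of what the setting controls, the sketch below builds a table URI both ways: with the account name in the path (path-style) and with the account name as a host prefix. This is my reading of the setting, not code taken from the StorageClient library. The local endpoint and port, the table.core.windows.net host and the account names are assumptions used only for the example.

```csharp
using System;

public static class TableUriSketch
{
    // Builds the URI for a table, placing the account name either in the
    // path (path-style) or in front of the host name (host-style).
    public static Uri BuildTableUri(
        Uri endpoint, string accountName, string tableName, bool usePathStyleUris)
    {
        var builder = new UriBuilder(endpoint);
        if (usePathStyleUris)
        {
            // Suits an endpoint addressed by IP, such as local Development Storage.
            builder.Path = "/" + accountName + "/" + tableName;
        }
        else
        {
            // Suits a hosted endpoint where each account has its own host name.
            builder.Host = accountName + "." + builder.Host;
            builder.Path = "/" + tableName;
        }
        return builder.Uri;
    }

    public static void Main()
    {
        // Assumed local Development Storage endpoint and account name.
        Console.WriteLine(BuildTableUri(
            new Uri("http://127.0.0.1:10002/"), "localaccount", "Courses", true));

        // Assumed Azure Storage endpoint with the account from this article.
        Console.WriteLine(BuildTableUri(
            new Uri("http://table.core.windows.net/"), "greenfeebroker", "Courses", false));
    }
}
```

An IP-based local endpoint cannot carry an account name as a host prefix, which is one plausible explanation for why the two environments need different values.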


Step 3: Creating a Deployment Package for Windows Azure

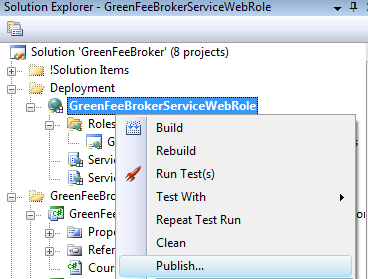

In order for Windows Azure to host your application, you have to deploy it, and it expects a deployment package to be configured just right. The Azure SDK ships with a utility called CsPack.exe that will do this for you, but in this case, it is far easier to just let Visual Studio do the work.

Right-click on the GreenFeeBrokerServiceWebRole Cloud Service project and then click Publish as shown in Figure 16. Within moments, a Windows Explorer instance will appear providing you with the location of the package and configuration file that Visual Studio just created for you. Copy the path to the folder to your clipboard; you will need it for the next step.

Step 4: Deploying Green Fee Broker to Windows Azure

Now, go back to the Green Fee Broker Web Role project in the Azure Services Developer Portal, and under Staging, click the Deploy button as shown in Figure 17. Next, select the GreenFeeBrokerServiceWebRole.cspkg under App Package and select ServiceConfiguration.cscfg under the Configuration Settings (if you forgot to copy the file path to the deployment package earlier, just browse in Windows Explorer to the location where Visual Studio created the package for you). Finally, provide a friendly label and click Deploy.


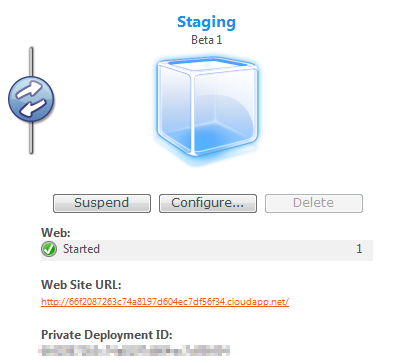

Step 5: Initializing and Starting Green Fee Broker

Now, under Staging, notice that a deployment label is provided to help you keep track of the version you are dealing with. Click Run. The state of the application will change to Initializing. Currently, this process takes a little bit of time, about three minutes. I suspect this is due to the process of initializing the VM(s) that will host the application. Be patient. Once initialization is complete, the status will change to Started as shown in Figure 18.

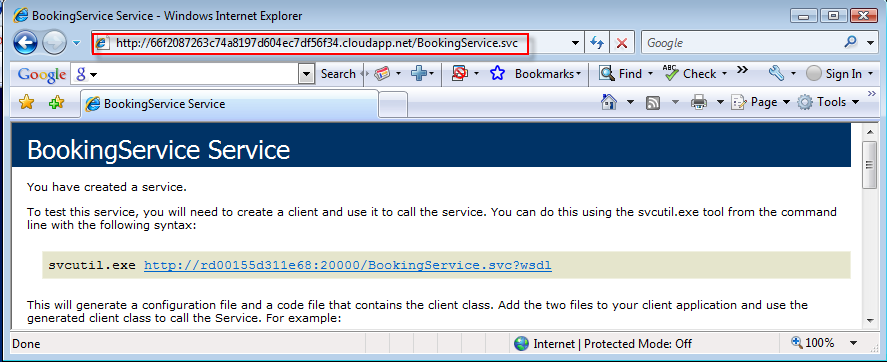

This is a great time to do some more testing. Click on the URL and append BrokerService.svc to it. You should see a familiar WCF hosting screen as shown in Figure 19. Notice that the Staging URL is a GUID, which is not human-friendly. This is because the staging environment is intended for testing purposes only. It is provided to allow for pre-production testing.

At this point, update the address in the client endpoint element in the App.config for the unit tests to the staging URL provided on the portal. Since you tested in the local Development Fabric, the test should pass.

Now, confident that you are ready to preview your little slice of functionality for the world, click on the circle in the middle of the vertical bar between Staging and Production to promote your application from Staging to Production. You will be asked if you are sure you want to promote your application. Click Yes.

Within moments, your application will be deployed to Production, setting the VIP for the load balancer to respond to requests from the production URL (in my case http://greenfeebroker.cloudapp.net/BrokerService.svc).

Update the address in the client endpoint element in the App.config for the ClientHarnessTests unit test file to http://greenfeebroker.cloudapp.net/BrokerService.svc.

Sound testing practices preclude surprises, and as expected the Green Fee Broker application runs in Production without issue. As you can see in Figure 20, it just works!

What About Security?

Security is a very broad topic, yet eminently important to the successful adoption of any cloud computing hosting environment.

Though not addressed in this article, from an authentication and authorization perspective, you can continue to use role-based security frameworks as provided by ASP.NET, or you may be interested in claims-based, federated security provided by the .NET Services Geneva Framework.

In addition, privacy and integrity concerns are extremely important when deploying applications across the Internet and many of the same mechanisms you use today will apply in Windows Azure.

You can be sure that Microsoft is taking security very seriously and you can expect more detailed information about supported security scenarios to be publicly available soon.

Conclusion

The Azure Services Platform is big. Very big. As shown in Figure 21, Windows Azure both forms the bedrock for the Azure Services Platform and provides a foundation on which to build applications for the cloud using many of the tools, languages and technology that you are already familiar with.

In this article, I’ve provided a very broad and early look at Windows Azure along with a step-by-step guide for getting your .NET applications up and running. I touched on some of the new patterns and considerations that must be addressed when designing an application for Windows Azure, and at the same time, demonstrated that the same patterns and practices you are using today will go a long way in forming a solid foundation for your Windows Azure application.

I believe that Windows Azure is an important signal that the Windows platform has matured tremendously over the last decade and that always-on computing is just around the corner. While significant for organizations of all sizes, cloud computing will not replace, but rather complement, enterprise computing and will bring a platform for delivering Internet-scale software + services capabilities within the reach of all developers, which is very exciting indeed.