Now that I have your attention, what does the word “bots” remind you of? Does it remind you of things such as natural language recognition? Artificial Intelligence? Computers stealing our jobs? Singularity, World War X, becoming pets to our computer overlords, the end of humanity as you know it?

Calm down! Bots are nothing like that. And no, the computer didn't bribe me with a piece of cheese to say this.

As computers have grown in complexity, our way of interacting with the computer has also changed. We used to interact using printouts and punch cards. Then some genius invented a CRT screen with great contrast ratio, and we had a terminal and a command prompt. How convenient was that; I could type in my commands using a text-based interface, and the computer responded. No more shuffling around those cards. The problem was remembering all those commands, so the developers of complex programs came up with menu-driven interfaces. You started the program and it presented you with a number of options to pick from. You picked an option and went to another screen full of options. Those were the days. Then came the GUI. The mouse was a particularly amazing invention. You could drag-and-drop things, and open and close windows. Most recently, we have touch screens. Who needs a mouse when you have ten styli attached to your hands, right?

Bots are simply the next evolution in how we interact with a computer.

A bot is an app that lets users interact with the app in a conversational way. The Microsoft Bot Framework is a framework that helps you write bots.

Conversational User Interface

Yes, I'm sorry to puncture your enthusiasm; this article won't mean World War X and the end of humanity. I'm simply describing a new way of interacting with a computer: through conversations.

You, the human, interact with a program via a conversation. Let's say that you start with “Hello.”

The bot receives this message and can interpret your intent using numerous mechanisms.

- It can try to do an exact match; this is nothing but simple string matching.

- It can try to recognize patterns; for instance, if the user's input starts with “Hello,” this must be the start of a conversation.

- It may use regex to do more complex pattern recognition.

- Or it may use LUIS (Language Understanding Intelligent Service), where the app literally tries to understand the user's intent. For instance, “What is the weather outside,” or “Do I need an umbrella,” or “How cold is it outside,” all mean the same thing, as far as the bot's concerned.
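The first three mechanisms can be sketched in a few lines of plain JavaScript. This is only an illustration of the idea, not the Bot Framework's API: the function name `recognizeIntent`, the intent names, and the patterns are all assumptions made up for this example, and the final step merely marks where a service such as LUIS could take over.

```javascript
// Hypothetical intents and patterns, chosen for illustration only.
const EXACT_MATCHES = { 'help': 'help', 'start over': 'restart' };

const PATTERNS = [
  { intent: 'weather', regex: /\b(weather|umbrella|cold|rain)\b/i },
  { intent: 'bookFlight', regex: /\bbook\b.*\b(flight|ticket)\b/i }
];

function recognizeIntent(text) {
  const input = text.trim().toLowerCase();

  // 1. Exact match: plain string comparison.
  if (Object.prototype.hasOwnProperty.call(EXACT_MATCHES, input)) {
    return EXACT_MATCHES[input];
  }

  // 2. Simple pattern: anything that starts with "hello" opens a conversation.
  if (input.startsWith('hello')) {
    return 'greeting';
  }

  // 3. Regex: looser keyword matching.
  for (const { intent, regex } of PATTERNS) {
    if (regex.test(input)) {
      return intent;
    }
  }

  // 4. Nothing matched; a real bot might hand the text to LUIS at this point.
  return 'unknown';
}

console.log(recognizeIntent('Hello there'));            // greeting
console.log(recognizeIntent('Do I need an umbrella?')); // weather
console.log(recognizeIntent('Tell me a story'));        // unknown
```

Note how quickly keyword lists become brittle: "How cold is it outside" happens to match, but any phrasing you didn't anticipate falls through to `unknown`. That gap is what natural language services are meant to close.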

Once the bot (your program), understands the user's intent, it can respond with a new set of questions, possible actions, or results. Results could be speech, text, a list, or anything similar.

The good news is that you don't need to be an AI expert to start writing your first bot. In fact, to understand the nuts and bolts of how the Bot Framework works, you need to know nothing more than exact string matching. The other pieces then fit logically into place.

Before I dive into code and a functional bot, there are some important concepts to understand.

Good Bot Design

Users have many ways of getting the information they need, but they have very little time or attention. When they want to know about the weather, they could go to a website, pick up a phone, or say “hey Siri.” They'll probably pick the route that's the least effort and still gets the answer they need.

Keep this in mind when designing your bot. For starters, you might make a single catch-all bot, the kind that will end the human race. That's probably not a good idea. Your bot should be specific to a function, and even within that function, it should narrow down the choices and the user's intent to something very specific. Here are some guidelines:

- Don't try to make the bot too smart. A bot that tries to do too much is not the best candidate because it will fail to recognize the user's intent. For instance, if you said, “search for Sahil,” should it return a YouTube video or a GitHub repo? How about, “search for the last thing Sahil published.” What exactly are you talking about here?

- Don't try to be cute! People use bots that are efficient and to the point. Don't pretend to be human; you aren't good at it. Jokes can be annoying, especially if they get in the way of getting things done. When I ask for weather, tell me, “It's 55F outside.” There's no need to follow that up with a cute rejoinder.

- Voice and natural language recognition aren't quite there yet. Don't get me wrong; they can be quite effective. For instance, when the user says a phrase, you could use Microsoft cognitive services to recognize the user and perhaps load their non-secure user profile. It needs to be non-secure because the voice could be recorded. However, when I say, “recognize speech,” how are you sure that I wasn't saying “wreck a nice beach?” The reality is that we humans are very good at voice recognition because we pair the meaning with context. So first establish context, and then try using these fancy tools. And ask any married couple: even with context, we humans sometimes misunderstand each other. Surely the bots can't be perfect either. Plan for imperfection.

- Keep it simple and expose it to as many channels as possible. The Microsoft Bot Framework lets you easily expose your bot functionality across numerous channels, such as Skype, SMS, email, Microsoft Teams, Facebook, and more.

- Narrow the problem down, starting with your welcome message. For instance, imagine a bot that responds to my hello with “Hello Sahil,” versus another bot that says “Hello Sahil. I can help you 1) Book an Air Ticket, 2) Book a hotel, 3) Book a car. Which would you like to do?” Which of the two do you think leaves me less confused?

- Break down your complex problems into simpler steps: these steps are your dialogs. For instance, the process of booking an air ticket could first start by asking “When are you leaving,” followed by “Where are you leaving from,” etc. You're effectively stepping through dialog after dialog, only in a conversation.

- Recognize that humans handle and expect exceptions. For instance, right in the middle of a conversation, the user could say, “can you check my calendar for me?” This means that your programming paradigm needs to let the bot:

- Start a new conversation.

- Replace the current conversation.

- Add to the existing conversation.

As you can see, it boils down to a lot of common sense. If I had to give you three tenets, they would be:

- Keep it simple; narrow down the problem.

- Don't be annoying.

- Be useful; get to the information that the user needs fast.

We're still in the infancy of bot design and a lot of best practices are still emerging. We're still not sure whether we find Siri's jokes annoying or useful. Luckily, the Bot Builder SDK and the Microsoft Bot Framework encompass concepts that let you model a bot. You could still design a bad bot, but then, you could, I hope, design a good bot too. Let's get a little bit more technical now.

The Bot Builder SDK

The Bot Builder SDK from Microsoft allows you to build bots using the Bot Framework. It models all the concepts necessary, such as dialogs, channels, state, interaction with LUIS, etc., to help you build compelling bots. Your bot may or may not be hosted in Azure, but hosting it in Azure is easier. Currently the Bot Builder SDK is available for .NET as a NuGet package and for NodeJS as an NPM module, or you can simply interact with it using REST APIs. There may be additional NuGet packages or node modules specific to channels.

Dialogs

Typically, you organize your interaction with a bot using conversations. These conversations are modelled as one or more dialogs. Dialogs contain prompts and waterfall steps. A waterfall is simply a number of sequential steps that the user goes through in logical progression, and at the end of that progression, the “waterfall” returns the entire result set.
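To make the waterfall idea concrete, here is a minimal sketch in plain JavaScript. It doesn't use the Bot Builder SDK; `startWaterfall`, the field names, and the step layout are assumptions invented for this illustration. Each step asks one question, stores the answer, and the last step hands back everything collected.

```javascript
// Each step names the field it fills and the question it asks.
// Field names and questions are hypothetical.
const bookFlightWaterfall = [
  { field: 'departureDate', prompt: 'When are you leaving?' },
  { field: 'origin', prompt: 'Where are you leaving from?' },
  { field: 'destination', prompt: 'Where are you flying to?' }
];

// Returns an object with next(): call it with no argument to get the first
// prompt, then call it with each answer to move to the following step.
function startWaterfall(steps) {
  const results = {};
  let index = 0;

  return {
    next(answer) {
      // Store the answer to the step that is currently waiting for one.
      if (answer !== undefined && index < steps.length) {
        results[steps[index].field] = answer;
        index += 1;
      }
      // Either ask the next question or return the whole result set.
      if (index < steps.length) {
        return { done: false, prompt: steps[index].prompt };
      }
      return { done: true, results };
    }
  };
}

const booking = startWaterfall(bookFlightWaterfall);
console.log(booking.next().prompt);             // When are you leaving?
console.log(booking.next('April 20th').prompt); // Where are you leaving from?
console.log(booking.next('Boston').prompt);     // Where are you flying to?
console.log(booking.next('Seattle').results);   // all three answers together
```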

Let's look at a conversation organized into dialogs.

- Usually, you start with a default dialog. For instance, “Hello Sahil; how may I help you?”

- Based on some trigger, such as a word match like “Help,” you may jump to an alternative dialog. For instance, “Help, I'm lost,” to which the bot can respond, “Sahil, I can help you with, 1) Book a flight, 2) Book a hotel room, 3) Book a car?”

- One dialog can redirect to another dialog. This redirection may be a logical “next” or it can be based on a condition. For instance, when using a default flow, “When are you flying out” may be followed by “Where are you flying to.” A conditional redirect might be triggered by “I want to book a hotel,” which leads to a second dialog about hotel reservations.

- One dialog can also redirect to another dialog based on an interruption or an action. When you jump based on interruptions and start a new dialog flow, you may resume the original dialog after the interruption is complete. For instance, in the middle of booking my travel plans, I may say, “When am I flying out?” which then may have the bot respond with “April 20th, 2018,” or “You have three flights in the next two months; which one would you like me to read out?” What's important here is that you want to remember the current dialog chain, because after the user knows when they're flying out, they'll want to return to the original dialog flow.

- Sometimes the redirection may cause the entire dialog redirection chain to start over, for instance, if the user says, “I'm confused, let's start over.”

- Dialogs usually follow a waterfall pattern, which guides the user through a series of steps or prompts.

Each one of these concepts - a dialog, a trigger, a dialog stack, a waterfall, actions/interruptions, replace dialog, and resume dialog - is modelled in the Bot Builder SDK.
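The reason a stack is the natural data structure for all this can be seen in a small sketch. The `DialogStack` class and its method names below are hypothetical and written in plain JavaScript for illustration; they are not the SDK's own types. The point is only that an interruption pushes a dialog, finishing it pops back to where the user was, a replace swaps the top entry, and "start over" resets everything.

```javascript
class DialogStack {
  constructor(defaultDialog) {
    this.defaultDialog = defaultDialog;
    this.stack = [defaultDialog];
  }

  // The dialog the user is currently talking to.
  current() {
    return this.stack[this.stack.length - 1];
  }

  // Redirect or interruption: remember where we were and go deeper.
  begin(name) {
    this.stack.push(name);
  }

  // Replace: swap the current dialog without growing the stack.
  replace(name) {
    this.stack[this.stack.length - 1] = name;
  }

  // Resume: finish the current dialog and return to the previous one.
  end() {
    if (this.stack.length > 1) {
      this.stack.pop();
    }
    return this.current();
  }

  // "I'm confused, let's start over."
  startOver() {
    this.stack = [this.defaultDialog];
  }
}

const dialogs = new DialogStack('welcome');
dialogs.begin('bookFlight');       // "I want to book a flight"
dialogs.begin('checkCalendar');    // interruption: "When am I flying out?"
console.log(dialogs.current());    // checkCalendar
console.log(dialogs.end());        // bookFlight (the original flow resumes)
dialogs.replace('bookHotel');      // conditional redirect
console.log(dialogs.current());    // bookHotel
dialogs.startOver();
console.log(dialogs.current());    // welcome
```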

State

Bots run as a Web service. This Web service is stateless. It could run over many nodes, and it might even be server-less. It could be something as simple as an Azure function. So the bot itself doesn't store any state; however, no self-respecting program - or should I say bot - could function without state. As in most newer applications, state is externalized and your program is stateless. State in the Bot Builder SDK is based on a provider model, and out of the box, it supports three kinds of state:

- In-memory storage: This is great for development purposes, but remember, because it's “in memory,” this kind of bot won't scale across multiple nodes.

- Azure Table storage

- Azure Cosmos DB

You can also author a custom state provider using the Bot Builder SDK Azure Extensions (https://github.com/Microsoft/BotBuilder-Azure). The good news is that no matter what state provider you choose, as long as you stick with the well-defined API, your program doesn't change. The state provider is simply plug-and-play. It's important that you stick with the various properties and methods that the Bot Builder SDK exposes, and not try to reinvent the wheel.
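The plug-and-play idea is easier to see with a toy version of a provider model. The sketch below is plain JavaScript, not the SDK's storage interface: the class names, the `getData`/`saveData` signatures, and `recordVisit` are simplified assumptions for illustration. What matters is that the bot logic only talks to the two methods, so either provider can be swapped in without touching it.

```javascript
// Provider 1: keeps state objects in a Map (development only).
class MapStateProvider {
  constructor() {
    this.items = new Map();
  }
  getData(key, callback) {
    callback(null, this.items.get(key) || {});
  }
  saveData(key, data, callback) {
    this.items.set(key, data);
    callback(null);
  }
}

// Provider 2: stores serialized rows, standing in for an external store.
class SerializingStateProvider {
  constructor() {
    this.rows = {};
  }
  getData(key, callback) {
    const row = this.rows[key];
    callback(null, row ? JSON.parse(row) : {});
  }
  saveData(key, data, callback) {
    this.rows[key] = JSON.stringify(data);
    callback(null);
  }
}

// Bot logic that depends only on getData/saveData, not on a concrete provider.
function recordVisit(provider, userId, callback) {
  provider.getData(userId, (err, state) => {
    if (err) {
      return callback(err);
    }
    state.visits = (state.visits || 0) + 1;
    provider.saveData(userId, state, (saveErr) => callback(saveErr, state.visits));
  });
}

for (const provider of [new MapStateProvider(), new SerializingStateProvider()]) {
  recordVisit(provider, 'sahil', () => {});
  recordVisit(provider, 'sahil', (err, visits) => console.log(visits)); // 2
}
```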

The Bot Builder SDK for NodeJS session object exposes the following properties for state data:

- userData: The state that's scoped to the user and is accessible over multiple conversations. For instance, if I asked to book a flight, userData is a great place to store this information because in a subsequent conversation, I could easily change the flight.

- privateConversationData: This state is for the user in this particular conversation. For instance, if I'm booking a flight and I say “forget about it,” I could simply call session.endConversation, and forget everything specific to me in this current conversation with the bot.

- conversationData: This is specific to a conversation, but is shared among all users in the conversation. However, this state is also cleared when the conversation is ended with the endConversation command. For instance, in a Microsoft Teams chat where multiple users are querying about a JIRA issue, clearing the conversation should effectively reset the state. For instance, three developers and one bot enter into a conversation. Developer 1 says, “open JIRA issue 331.” The bot responds with, “JIRA issue 331: modify styles to reflect updated branding, is now open.” Developer 2 can then ask, “What Git branches were created for this issue,” etc. This could continue until a new issue is opened, which opens a new conversation. What's important is that in the second message where Developer 2 says “this issue,” the bot clearly understands that “this” means JIRA issue 331, which is stored in conversationData.

- dialogData: This contains data specific to the current dialog only.
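One way to keep the four scopes straight is to look at what each one is keyed by. The following is a plain JavaScript model, not the SDK itself: `createStateStore`, `forMessage`, and the key format are assumptions made for this sketch, and the IDs are assumed not to contain colons. The property names match the ones listed above.

```javascript
function createStateStore() {
  const buckets = new Map();

  // Returns the bag stored under a key, creating it on first use.
  const bucket = (key) => {
    if (!buckets.has(key)) {
      buckets.set(key, {});
    }
    return buckets.get(key);
  };

  return {
    // Builds the state bags that one incoming message would see.
    forMessage(userId, conversationId) {
      return {
        userData: bucket(`user:${userId}`),
        conversationData: bucket(`conversation:${conversationId}`),
        privateConversationData: bucket(`private:${conversationId}:${userId}`),
        dialogData: {} // lives with the current dialog only; fresh in this sketch
      };
    },

    // Ending a conversation clears conversation-scoped state, not userData.
    endConversation(conversationId) {
      for (const key of [...buckets.keys()]) {
        if (key === `conversation:${conversationId}` ||
            key.startsWith(`private:${conversationId}:`)) {
          buckets.delete(key);
        }
      }
    }
  };
}

const store = createStateStore();

// Developer 1 opens the issue in a group chat.
const dev1 = store.forMessage('dev1', 'teamsChat');
dev1.conversationData.issue = 331;
dev1.userData.displayName = 'Developer 1';

// Developer 2, in the same chat, can refer to "this issue".
const dev2 = store.forMessage('dev2', 'teamsChat');
console.log(dev2.conversationData.issue); // 331

store.endConversation('teamsChat');
const later = store.forMessage('dev1', 'teamsChat');
console.log(later.conversationData.issue); // undefined
console.log(later.userData.displayName);   // Developer 1
```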

The Bot Builder SDK for .NET exposes similar capabilities via Getter and Setter methods. The method names and properties may be different, but the concepts are the same.

Channels

Bots are, put simply, programs that you interact with via conversations. And just as we humans can have conversations over many mediums, or let's say channels, bots can interact via multiple channels. Examples of channels include a simple website that hosts a chat-based channel, Skype, and Cortana. As you may have guessed, Cortana may have some peculiarities, such as speech. Microsoft Teams is yet another channel, which introduces its own unique set of peculiarities and features. For instance, a Teams-based bot can do 1:1 conversations or participate in a group chat.

It can also send notifications to a user. Imagine if I had a helper bot that I could tell, “@documentWatcher, please alert me when a new document is created with the phrase ‘pay raise for Sahil.’” As you may have guessed, this action that I specified to my Microsoft Teams bot is handled by a Web service. This Web service is nothing but an app, as far as Microsoft Graph is concerned. Just like any app, it has permissions. Based on those permissions, it could execute a search and keep watching for documents matching my specified phrase. And when such a phrase match occurs, it can notify me, which is simply sending a message to the user.

The phrase match doesn't even have to be exact; using LUIS, you can add natural language intelligence, even in the matched documents. For instance, “Should we give Sahil more money” is somewhat close to “Give Sahil a pay raise.” I should know about that too, right? What about “Should we fire Sahil?” Yes, with natural language recognition, I could write logic around that too. It's quite incredible. I'm sure you're beginning to see the value and power of mixing bots in your various channels already.

Developing and Testing Your Bot

There's one last thing you need to know before you dive into code, and that's how you go about developing and testing your bot. As I mentioned, the bot is just a Web service. But it's a Web service that the surface you're testing, be it Microsoft Teams, a Web-hosted chat, or something else, should be able to communicate with. There are three options here.

The first and the simplest option is to use a cross-platform app written in Electron called the Bot Framework emulator. You can download this emulator from https://github.com/Microsoft/BotFramework-Emulator. This emulator allows you to write and test your bot without exposing anything to the Internet.

The other two options require exposing your development endpoint to the Internet. Although this may sound scary, the reality is that in this cloud-friendly world we live in, Azure-based services frequently need to talk to dev endpoints. Naturally, easier solutions have emerged to this problem. My favorite is ngrok, a simple exe that exposes your dev endpoint to an HTTP or HTTPS URL on the Internet that ends in ngrok.io. The only consideration here is that the endpoint changes every time you run ngrok. Luckily, the bot registrations in Azure make it easy to change the endpoint.

The other option is to expose the endpoint by hosting it on the Internet. I like to use Azure websites because even though the endpoint is exposed to the Internet, I can still debug the code in dev mode. Yes, debugging an Azure site is slower than debugging something locally, so my preferred approach is ngrok. I just run it, minimize it, and forget about it. I can restart my Web service endpoint as many times as I wish. And if I have to update my endpoint URL in my Azure bot registration once a day, it's not that big a deal.

The Hello World Bot

So far, there's been lots of talk; it's time to see some code. Let's start by authoring a simple “Hello World” bot. I'll use NodeJS, but everything I show here can also be done using .NET.

To start with, like any other node-based project, create a package.json file. In this package.json file, add the code shown in Listing 1.

Listing 1: The Hello World Bot package.json

    {
        "name": "simplebot",
        "version": "1.0.0",
        "description": "",
        "main": "index.js",
        "scripts": {
            "start": "node index.js"
        },
        "dependencies": {
            "botbuilder": "^3.13.1",
            "restify": "^6.3.4"
        },
        "devDependencies": {
            "@types/restify": "^5.0.6"
        },
        "keywords": [],
        "author": "",
        "license": "ISC"
    }

As can be seen in Listing 1, in addition to referencing the botbuilder npm package, I also use restify to expose it as a Web service. Also, the start command uses node to run index.js, the code for which can be seen in Listing 2.

Listing 2: Hello World Bot

    const builder = require('botbuilder');
    const restify = require('restify');

    // No App ID or password is needed while testing in the emulator.
    const connector = new builder.ChatConnector();

    // The array holds a single function that replies to every message.
    const bot = new builder.UniversalBot(
        connector,
        [
            (session) => {
                session.send('Hello world!');
            }
        ]
    ).set('storage', new builder.MemoryBotStorage());

    // Expose the bot as a Web service on port 3978.
    const server = restify.createServer();
    server.post('/api/messages', connector.listen());
    server.listen(3978);

Listing 2 shows the simplest bot you can make. No matter what message you send it, it replies with “Hello world!” As can be seen, I'm using builder.UniversalBot, which accepts two parameters. The first is the connector on which I wish to expose the bot. The second is an array of dialogs, of which I have only one. Usually these are functions whose first argument is session. Session allows me to get a lot of valuable information. For instance, what the user said last can also be grabbed off of the session object. I'm keeping it simple and simply replying with “Hello world!” no matter what the user said.
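As a small variation, here is a sketch of a step that reads what the user said off the session and echoes it back. The function name `echoStep` is made up for this example, and the `fakeSession` object is only a stand-in that stubs the two members the step touches, so the snippet can run without the SDK. To try it in the real bot, you would pass `echoStep` in the array given to builder.UniversalBot in place of the arrow function in Listing 2.

```javascript
// Replies with whatever text the user sent.
function echoStep(session) {
  const text = (session.message && session.message.text) || '';
  session.send('You said: ' + text);
}

// Stand-in session for trying the step outside the Bot Builder SDK.
const sent = [];
const fakeSession = {
  message: { text: 'Hello bot' },
  send: (reply) => sent.push(reply)
};

echoStep(fakeSession);
console.log(sent[0]); // You said: Hello bot
```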

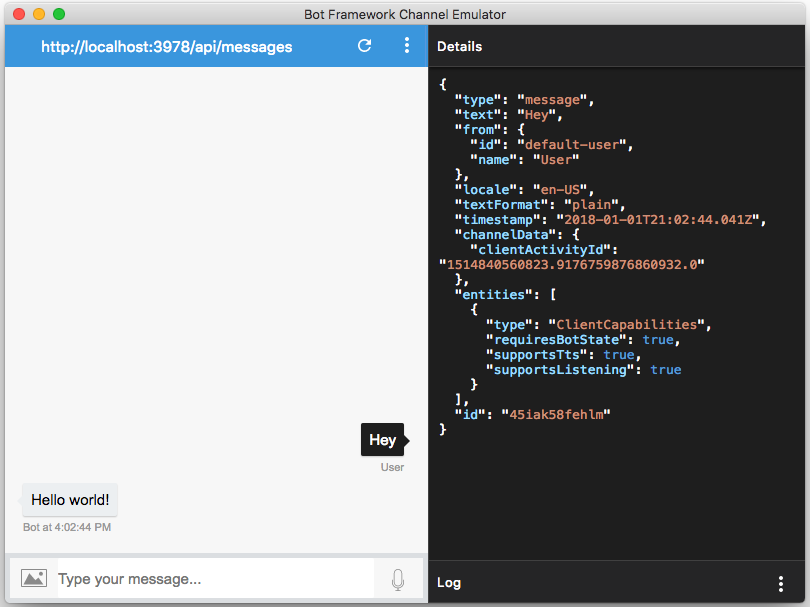

Let's test the bot next. Download the Bot Framework emulator and run it. Also in your project, run npm install and npm start. Once your project is running, switch over to the Bot Framework emulator, and connect to http://localhost:3978/api/messages. You don't need a Microsoft App ID or App Password yet; these become relevant once you start deploying the app in Azure or using it in connectors.

Once connected, go ahead and type any message to your bot; you should see the bot respond with “Hello World!” as shown in Figure 1. You can also see in Figure 1 that I can click on any message and see the details of that message as JSON in the right-hand pane.

Writing a Bot for Microsoft Teams

The great thing about the Bot Framework is that one bot can be exposed to multiple channels. One such channel is Teams. But what fun is it to expose a simple Hello World bot to Teams? Well, the fact that you're running inside Microsoft Teams should pose some interesting opportunities.

A Microsoft Teams bot can:

- Have 1:1 chats, where the user messages the bot just like they would message another user.

- Have chats inside a channel, so the bot becomes a member of the Team, and multiple users can talk to the bot.

- Notify a user by @mentioning them or sending them a notification.

- Fully interact inside the Teams “context.” It can do things like get the user's profile, get a list of channels in a Team, get members of a Team, etc.

- Be granted permissions in Microsoft Graph just like you'd grant permissions to any other Graph application. This means that the bot can act as a graph application, and query for things such as mail, calendar, documents, and much more.

An Important Note

At the time of writing this article, there are two ways to register a bot. You can go to https://dev.botframework.com/ and click “Create a bot or skill,” which is the equivalent of logging into your Azure portal and provisioning a new bot. Or you can go to https://dev.botframework.com/bots/new. When you use the second link, you get the option of migrating the bot to Azure. Down the road, we'll most likely create bots only in the Azure portal. Right now, Teams bots are more reliable if you create them directly in the https://dev.botframework.com/bots/new URL. I've noticed strange behavior, such as a newly registered bot in Azure being responsive on every channel except Teams. I'm sure these growing pains and kinks will be sorted out before Azure is the only way to register bots. But until then, I'd suggest creating them using the https://dev.botframework.com/bots/new URL. I'll mention both Azure and non-Azure instructions below.

Registering a New Bot for Microsoft Teams

Visit https://dev.botframework.com/bots/new, and sign in with your Office 365 credentials. If you instead intend to create the bot through the Azure route (https://dev.botframework.com/ and “Create a bot or skill”), you'll still sign in using your Office 365 credentials, but this credential must also have an Azure subscription associated with it.

Registering a bot is a matter of filling out a simple form. You need to specify things like the display name, the @botHandle, a description, etc. The interesting thing is the messaging endpoint. This messaging endpoint is what you will expose to the Internet either via ngrok or an Azure website. You haven't exposed anything yet, so just type https://something there for now.

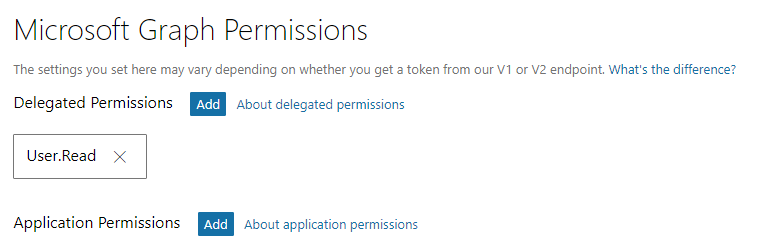

You'll also need to generate a Microsoft AppID and Password. Behind the scenes, you're simply registering the bot as an app using the v2 app model. When you generate the AppID and Password, note it down somewhere. You'll need it shortly.

Also, under the “Microsoft Graph Permissions” section, grant it the “User.Read” permission, as shown in Figure 2.

Configure Channels for Your Bot

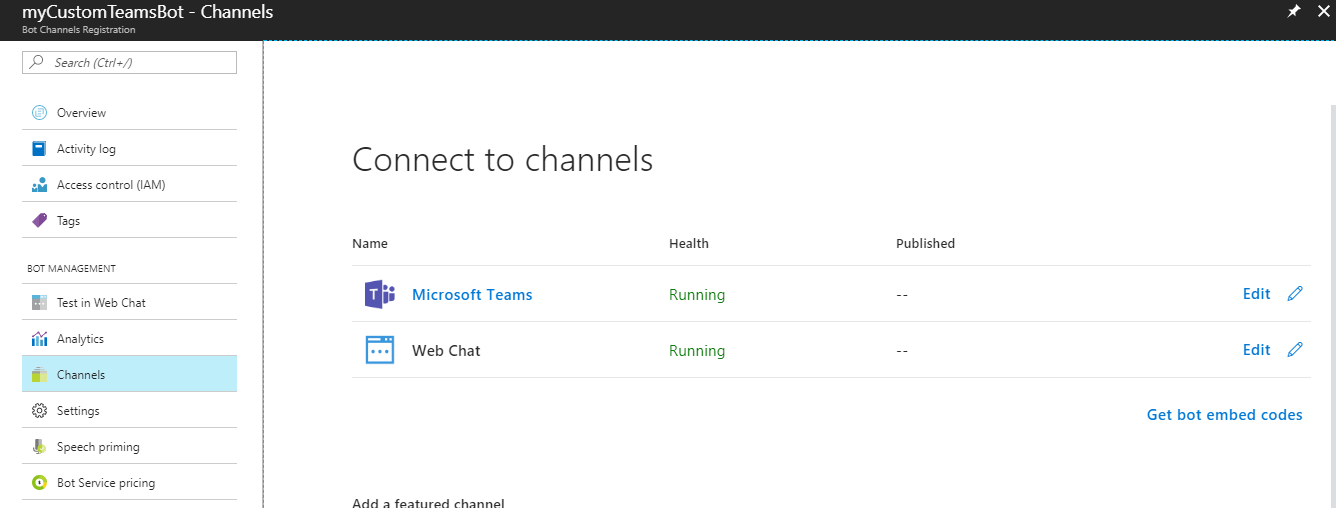

Here, the instructions become different based on whether this is a bot you registered using the old URL directly in Microsoft Teams, or via Azure. Visit https://dev.botframework.com/bots to see the list of bots you've registered. Clicking on the bot should take you either to the Azure portal (if you use the Azure/new way of registering) or to a different page if you used the old way of registering.

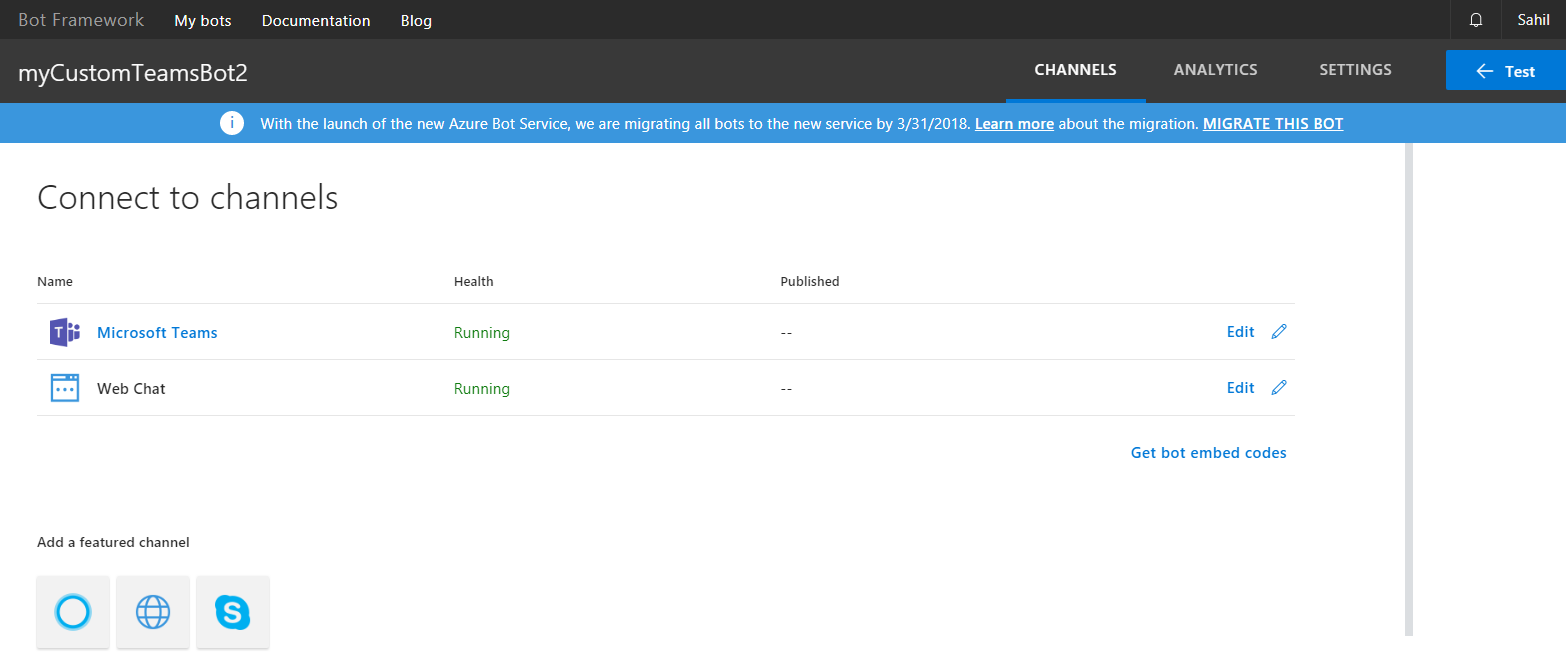

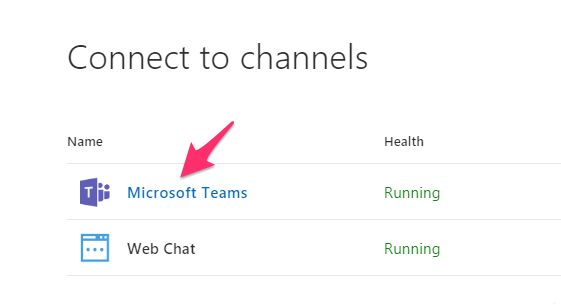

If you're in the Azure portal, look for “channels” and choose to add the “Microsoft Teams” channel, as can be seen in Figure 3. Alternatively, if you're using the old way of registering your bot, you'll be presented with a different user experience, as can be seen in Figure 4.

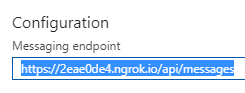

One last thing before we leave the bot registration page. Under the “Settings” area in the top navigation bar for the old registration portal, and in the left side navigation area under channels in the Azure portal, you'll see a place called “Configuration” and “messaging endpoint.” Just take a note of that; you'll need to modify the value there once you expose the bot on the Internet using ngrok.

Writing the Teams-ready Bot

In the NodeJS-based project, remove the reference to botbuilder, and instead add a reference to botbuilder-teams. I'm using version 0.1.7. Note that botbuilder-teams includes a reference to botbuilder, so there's no need to add it explicitly. There are no other changes in package.json.

Inside index.js, write the code as shown in Listing 3. As can be seen in Listing 3, the code is strangely familiar. It is, after all, a bot! But, this time around, I'm using dialogs. And the two dialogs I'm using are triggered based on a regex match. One is triggered when the user writes “getchannels” to the bot. The second is triggered when the user writes “aboutme” to the bot.

Listing 3: Our Teams-ready bot

    const builder = require('botbuilder');
    const builderTeams = require('botbuilder-teams');
    const restify = require('restify');

    // Replace "removed" with the App ID and password from your bot registration.
    const connector = new builderTeams.TeamsChatConnector({
        appId: "removed",
        appPassword: "removed"
    });

    const bot = new builder.UniversalBot(connector)
        .set('storage', new builder.MemoryBotStorage());

    const dialog = new builder.IntentDialog();

    // "getchannels": list the channels of the Team the message came from.
    dialog.matches(/^getchannels/i, [
        function (session, args, next) {
            console.log(session);
            connector.fetchChannelList(
                session.message.address.serviceUrl,
                session.message.sourceEvent.team.id,
                (err, result) => {
                    if (err) {
                        session.endDialog(JSON.stringify(err));
                    }
                    else {
                        session.send('Here are the matching channels');
                        session.endDialog(JSON.stringify(result));
                    }
                }
            );
        }
    ]);

    // "aboutme": list the members of the current conversation.
    dialog.matches(/^aboutme/i, [
        function (session, args, next) {
            console.log(session);
            var conversationId = session.message.address.conversation.id;
            connector.fetchMembers(
                session.message.address.serviceUrl,
                conversationId,
                (err, result) => {
                    if (err) {
                        session.endDialog(JSON.stringify(err));
                    }
                    else {
                        session.endDialog(JSON.stringify(result));
                    }
                }
            );
        }
    ]);

    // Remove the bot's own @mention from the text before the regex matching runs.
    bot.use(new builderTeams.StripBotAtMentions());
    bot.dialog('/', dialog);

    const server = restify.createServer();
    server.post('/api/messages', connector.listen());
    server.listen(3978);
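Listing 3 shows the appId and appPassword as the literal string "removed". Rather than pasting the real values into index.js, one option is to read them from environment variables. The sketch below assumes the variable names MICROSOFT_APP_ID and MICROSOFT_APP_PASSWORD, and the helper name `readConnectorSettings` is made up for this example; use whatever names suit your deployment.

```javascript
// Builds the settings object for the connector from environment variables.
// The variable names are an assumption, not something the SDK requires.
function readConnectorSettings(env) {
  const appId = env.MICROSOFT_APP_ID;
  const appPassword = env.MICROSOFT_APP_PASSWORD;

  if (!appId || !appPassword) {
    throw new Error(
      'Set MICROSOFT_APP_ID and MICROSOFT_APP_PASSWORD before starting the bot.'
    );
  }
  return { appId, appPassword };
}

// In index.js, the connector from Listing 3 would then be created with:
// const connector = new builderTeams.TeamsChatConnector(
//     readConnectorSettings(process.env));
```

Failing fast with a clear message is a deliberate choice here: a bot started without credentials tends to fail later with far less obvious errors.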

It's very important to realize that the “aboutme” action can work in either a personal chat or in a group chat in a channel. However, getchannels requires you to provide a Team ID, and the only way to get the Team ID currently is from the session's context. In other words, in order to test “aboutme,” I can simply reference the bot in a personal chat. I don't need to package it up as a zip file and add it to Teams. To test it inside the context of a Team, I need to upload the bot into Teams as a zip file. I'll leave that for another day; for now, let's just see how to test the “aboutme” action.
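Because a personal chat carries no Team in its context, the expression session.message.sourceEvent.team.id in Listing 3 would fail there. A small guard can make that case explicit. This is a sketch: `getTeamId` is a hypothetical helper, and it relies only on the same message shape that Listing 3 already reads.

```javascript
// Returns the Team ID carried by a message, or null when there is none
// (for instance, in a 1:1 chat with the bot).
function getTeamId(message) {
  const source = message && message.sourceEvent;
  return (source && source.team && source.team.id) || null;
}

// Possible use inside the getchannels handler from Listing 3:
// const teamId = getTeamId(session.message);
// if (!teamId) {
//     return session.endDialog('getchannels only works inside a Team.');
// }

console.log(getTeamId({ sourceEvent: { team: { id: 'team-123' } } })); // team-123
console.log(getTeamId({ sourceEvent: {} }));                           // null
```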

Testing Your Bot

There are two things you need to do in order to test your bot.

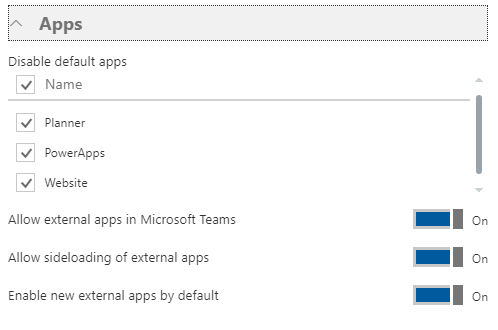

First, you need to enable sideloading of bots in Microsoft Teams. Log in as tenant administrator and go to the admin area. Under Settings\services and add-ins, look for Microsoft Teams. Ensure that sideloading of external apps is enabled under the Apps section, as shown in Figure 5.

It's worth mentioning that most administrators feel itchy turning this capability on. I advise you to do this in a developer tenant and not in the production tenant. I wish we could turn this capability on on a group-by-group basis, but we can't. Not today at least.

The second thing is to simply test the bot out.

Go ahead and run the bot. This is a matter of doing npm prune (to remove botbuilder), npm install (to add botbuilder-teams), and npm start, to run the bot.

Once your bot's running, download ngrok from ngrok.io, and run ngrok http 3978, where 3978 is the port your bot runs on locally.

Ngrok should give you two URLs: an HTTP URL and an HTTPS URL. Essentially, ngrok exposes your port 3978 on the Internet via a duplex outbound TCP channel. If that doesn't impress your mom, I don't know what will.

Once you have this ngrok URL handy, go ahead and update it under the Messaging Endpoint area of your bot registration. Mine looks like Figure 6.
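An easy mistake at this step is to paste the bare ngrok URL and forget the route the bot listens on, which is /api/messages in the listings above. The helper below is a hypothetical convenience, not part of any SDK; it assumes you use the HTTPS forwarding URL, and the subdomain in the example is made up.

```javascript
// Combines the ngrok forwarding URL with the route the bot listens on.
function messagingEndpoint(ngrokUrl, route = '/api/messages') {
  const url = new URL(ngrokUrl);
  if (url.protocol !== 'https:') {
    throw new Error('Use the HTTPS forwarding URL that ngrok prints.');
  }
  url.pathname = route;
  return url.toString();
}

console.log(messagingEndpoint('https://abc123.ngrok.io'));
// https://abc123.ngrok.io/api/messages
```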

Now go ahead and launch Teams and @mention the GUID of your bot. This GUID is the app ID of your bot registration.

Alternatively, you can also launch Microsoft Teams by clicking on the link shown in Figure 7.

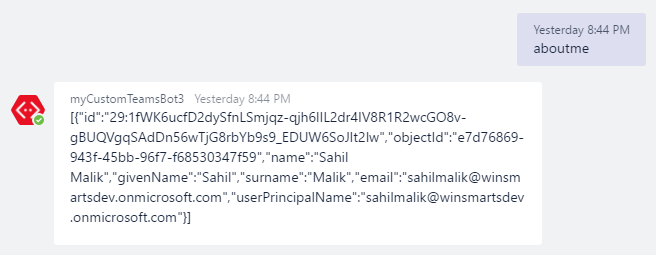

Type “aboutme” and you should see the bot respond as can be seen in Figure 8.

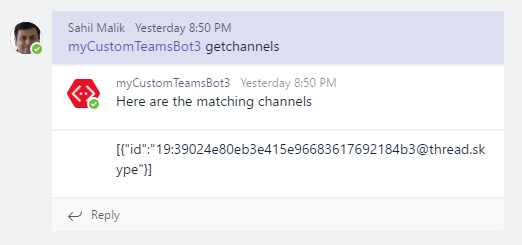

If you're inclined to do so, you can also package your bot as a zip file and sideload it into a Team. In the channel, you can talk to the bot directly using getchannels and you should see the bot respond with the list of channels in a specified Team. In my case, I had only one channel, as can be seen in Figure 9.

Summary

I'll share an interesting side note about myself. My education is both in computers/engineering and biology. I never wanted to be a doctor because I just couldn't get over all those long complex names, or dealing with disease and misery every day. One of my favorite topics in biology was genetics. It was quite mathematical, it involved calculating probabilities, and I always did well in that topic, while my fellow biology-inclined classmates were baffled by it. Another topic very near and dear to me was neurology. There was a surprising amount of physics involved. I found it amazing that our spinal cords carry current, and that our neurons have a voltage difference between the top and bottom. Incredible! I also found it amazing that when I touch a live wire, I have a reflex reaction where my brain doesn't participate in the decision making, because this is considered a life-threatening proposition. The time required for the brain to act is too long, so the neurons make a decision. The time required is about the same as the time an electric impulse takes to travel about a meter, the distance between my fingertip and my head.

Our bodies are walking and talking computers! They're capable of conversations, touch, emotion, love, hunger, and so much more.

My computer-oriented head led me to think that computers are essentially very simple brains. Note that when I was learning about neurons, the computer I had access to didn't have a hard disk and it had an 8-bit processor. But even today, computers can be considered simple. They definitely produce computation and not intelligence. But, they have the same components - thinking/processing, data collection, learning experience, just as we do. As a system becomes more intelligent, it inherently also becomes a bit more unreliable. Isn't intelligence merely a higher level of computation?

A very long time ago, I wrote an article for CODE Magazine (http://www.codemag.com/article/0807031). I'll repeat from the section under “Trim the UI” here:

"I've laid out the following items in order of complexity.

- Light Bulb: A light bulb is rather simple. You flick a switch and 99.999% of the time, it turns on.

- Dog: You pet a dog and it wags its tail. Sometimes it may bite.

- SharePoint: More on this shortly.

- Men: It's not that flicking the light switch should turn the bulb on, but first we must question, is light truly what we need? Let's call a meeting.

- Women: Well, I'm a man, so I can't understand them myself, much less explain them to you.

SharePoint, as you can see, is more complex than a dog, but less complex than a human. Isn't that what most computer software is becoming anyway? You flick a switch, and the bulb turns on - most of the time."

I think we're entering a phase where computers are becoming smarter than a light bulb, maybe even smarter than your dog, but not yet as smart as humans!

What's important is that computers will be available in a range of intelligences, and it's up to us to choose how to apply them to our needs. There could be simple computers whose only job will be to reliably inform us of a water leak or an intruder. Or there could be more complex computers that could drive a car, tell a joke, paint a painting, or compose a poem. Yes, I do feel that computers will do all this one day and it will improve our lives.

We'll be like the most pampered pet of our computer overlords. It's a good thing I'm cute. I wonder what your game plan is.

For now, we're far away from that day, so let's learn the Bot Framework and a number of other interesting things.

Happy coding.